Orion ST80 GSO Focuser Upgrade

Apologies for the quality, I just shot these on my phone.

About twelve years ago, when I had started to recover from graduate school burnout, my wife bought me an Orion ST80-A as a way to get me back into astronomy. At the time the optical tube alone was under $200, and it was quite the bargain for an ultra-portable telescope. I had wanted one back in middle school in the 90s when they came out, and to this day I still like refractors despite their price and impracticality.

Since then I've accumulated a few other telescopes, and this one sits idle most of the time. The one exception is solar viewing, since I have a white light filter for this diameter scope. Plus I tend to do solar viewing in outreach-type scenarios, so having the smaller scope is nice for stowing in my office or leaving in the car. It also doesn't need much of a mount, and I can usually get away with my larger photo tripod along with a Wimberley-style gimbal head I use for larger telephoto lenses.

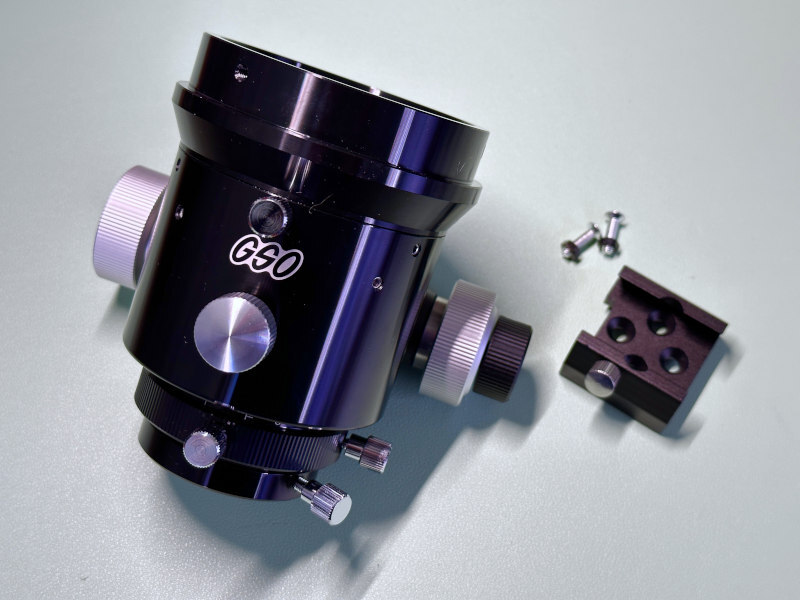

The most disappointing thing about using this telescope has usually been the focuser. It's a single-speed rack and pinion that wasn't the smoothest even brand new, and after a little over a decade it has only gotten stiffer and less fun to use. Fortunately GSO makes a two-speed linear Crayford focuser that is a direct-fit replacement for the original. For the ST80 and its mini variants (the same telescope was sold under many brands, including Orion and Celestron), you'll want the 86mm version of the focuser. These days, due to inflation and whatnot, these aftermarket focusers have sadly crept up a lot in price, to the point where they almost cost more than the telescope itself. Still, for such a quality of life upgrade I think it's worth it.

As a bonus this is also a rotating focuser, so you can change the angle of the eyepiece. That's almost worth the cost of admission on its own. The GSO focuser is a bit heavier than the stock version, and you'll need to buy a mounting shoe if you want to keep using the stock finder, but those are about the only downsides. The stock focuser only accepts 1.25" eyepieces, while the GSO will take 2" parts. With this scope I'm not sure that's really needed or wise, but it does make it easier if I ever want to do imaging through it, since most of my parts for that are based on the 2" standard. I have done imaging with the ST80 before, and being a lower-priced achromat designed in the 90s, it's what I'd call serviceable at lower magnifications and on lower-contrast objects.

I honestly think this is a worthwhile upgrade if you've got one version or another of the ST80 lying around. It improves the usability quite a bit, and that's probably 90% of what makes a telescope good in my book. I had been looking at swapping out the grease on the old rack and pinion focuser, which is a bit of a chore, but with a Crayford working off friction that is no longer an issue either.

Maybe the prices on the aftermarket parts will come down at some point, or you can wait for a sale. I was hoping to use this for the eclipse, but between cloud cover and a last-minute illness I wasn't really able to get out and put it to use. Hopefully once the weather clears I'll be able to use it a little more. Now to look on the used market for the hydrogen-alpha telescopes people are trying to pawn off after the eclipse!

Darktable vs Camera JPG: why are the colors different?

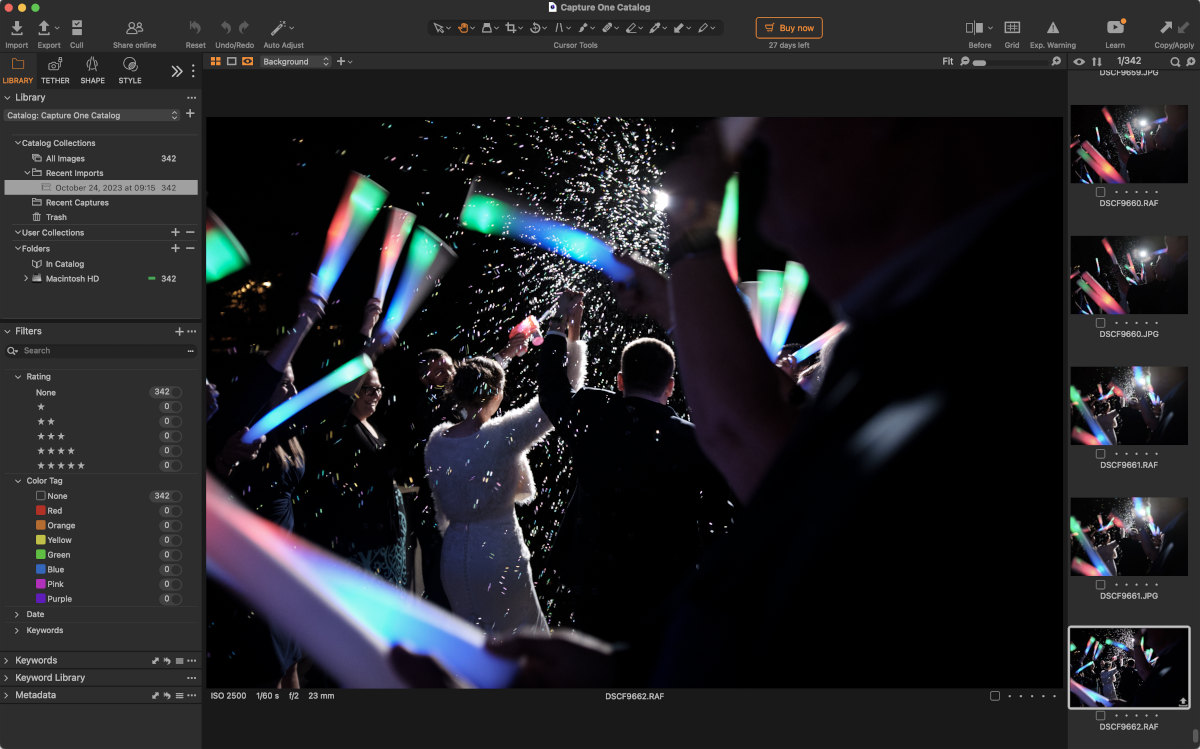

Straight out of camera JPG, Fuji X100F

Color is weird. It only really exists in our heads, and there have been many tomes written about how to represent it and how to process it, especially in digital imaging. A question that comes up often with RAW photo processors in general, and Darktable in particular, is why the colors end up looking so different from what the camera produces in a JPG.

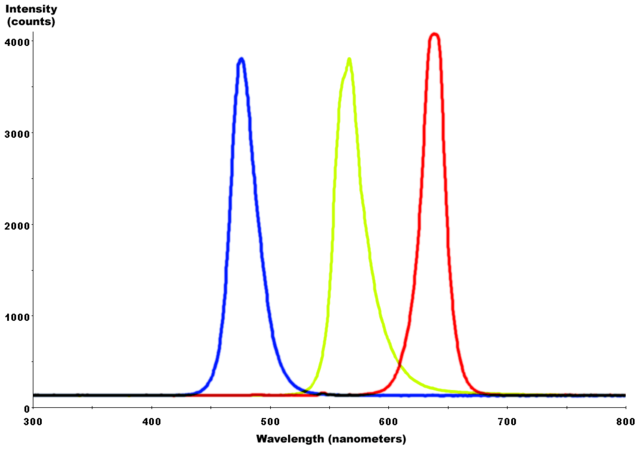

Above is a JPG straight out of my Fuji X100F on the Classic Chrome setting. It looks nice, but there's a problem brewing. Looking at the LED light sticks, one notices a very cyan or teal center in the blue LED section. These were very cheap LED light sticks and, as far as I could tell, contained one red, one blue and one green LED. LEDs are also generally a very narrow-spectrum light source: an individual LED only emits a narrow band of wavelengths in the visible spectrum, so it's impossible for this single blue LED to produce teal or cyan. White LEDs can be made by mixing several different color LEDs behind a diffuser, or by coating a blue or UV LED with a fluorescent phosphor. But the regular low-cost LEDs in applications like these are most definitely not those, so where's that cyan coming from?

Typical spectral output of blue, green and red LEDs, respectively. Notice how narrow the spectral bandwidth is and how high the peaks are.

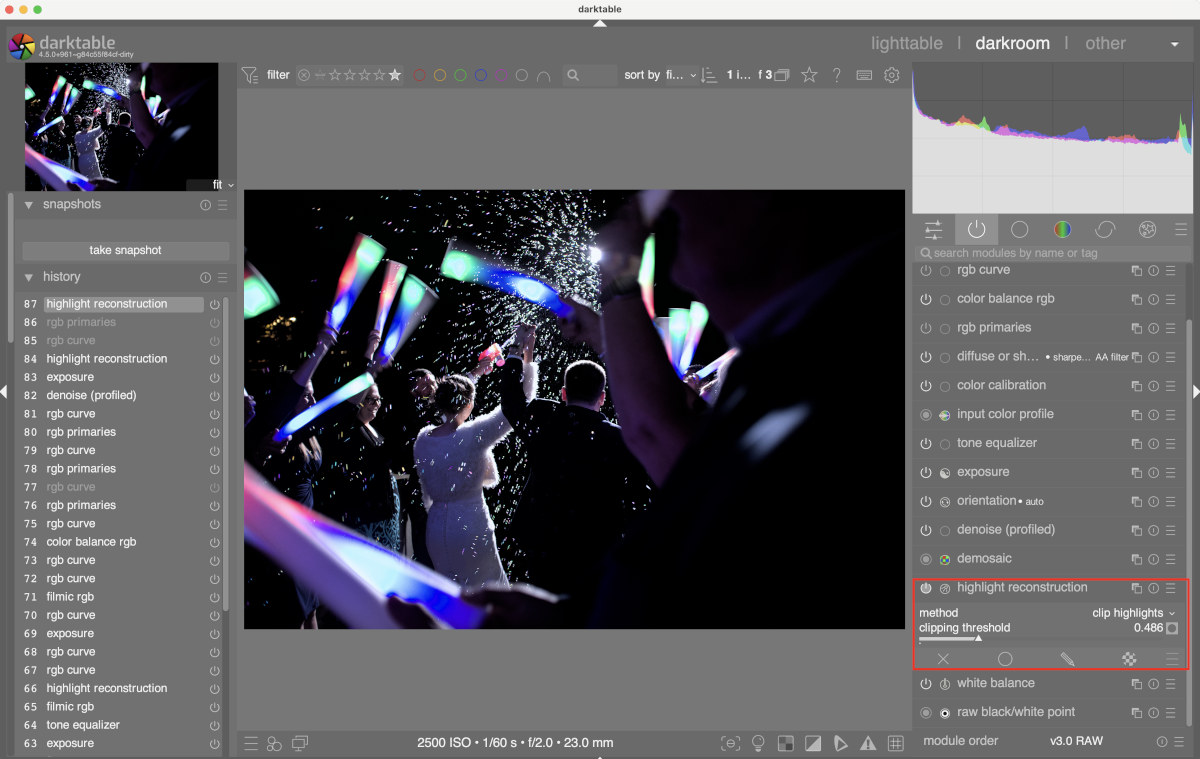

Things only get more confusing once the RAW file is loaded into Darktable.

Default Darktable 4.4 processing, Filmic v7

Gone are the teals and cyans, but now there's this harsh blue gradient. Frankly it looks like garbage. "Good grief, hoodwinked again. I guess you get what you pay for; it's free so it can't be good," one might think. Except that in this case Darktable is showing you the more technically correct representation.

Think of shade as a property of color. If one were to draw a strip running from dark shades of blue to light shades of blue, it might look something like the strip below. As the color lightens it gets progressively closer to white until it becomes pure white. There's not a pixel of cyan or teal in that strip. Those are different hues, and they cannot fall out of a purely blue light source just by increasing the exposure.
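A quick way to check the claim about shades: the minimal Python sketch below uses the standard library's `colorsys` to blend pure blue toward white and report the HSV hue at each step. Treating "lighter" as a straight linear blend with white is a simplifying assumption, not how Darktable or a camera actually renders highlights, but it shows that a lighter shade of blue is still the same hue.

```python
import colorsys


def blend_toward_white(rgb, t):
    """Linearly mix an RGB triple (0..1) with white; t=0 is the input, t=1 is white."""
    return tuple(c + (1.0 - c) * t for c in rgb)


def hue_degrees(rgb):
    """Hue angle in degrees from the standard-library HSV conversion."""
    h, _s, _v = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0


blue = (0.0, 0.0, 1.0)
for t in (0.0, 0.25, 0.5, 0.75):
    tint = blend_toward_white(blue, t)
    # Every line reports hue=240 (blue); only saturation drops as t grows.
    print(f"t={t:.2f} rgb={tint} hue={hue_degrees(tint):.0f}")
```

The hue only becomes undefined at t=1, where the color is pure white; it never passes through cyan on the way there.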

Loading the file into Capture One, Fuji's RAW processor of choice, yields very similar results to the Fuji JPG too.

Yes, I just used the trial version.

Considering Fuji apparently works with Capture One and probably shares information about their curves and JPG processing, I would expect it to match closely. I don't have an Adobe subscription so I cannot test that, and from what I can tell Adobe doesn't allow Camera Raw to be used standalone anymore either.

So, does this mean the professional software and thousand-dollar camera are lying to you? Well, possibly. It depends on how you look at it, but Fuji have certainly made some decisions for you in their processing. What they are doing is clipping the blue channel, which causes a hue shift, or hue rotation, toward cyan.
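To see how clipping alone can manufacture cyan, here is a rough Python model using the standard library's `colorsys`. It assumes the camera records the blue LED as mostly blue with some green and a little red, since a sensor's color filters have overlapping responses; the 0.1/0.4/1.0 values are illustrative guesses, not measurements from the X100F, and a real JPEG engine does far more than a per-channel clamp. Raising exposure and clamping each channel at 1.0 pins blue first while green keeps climbing, so the hue walks from blue toward cyan (180 degrees). Scaling without the clamp leaves the hue untouched.

```python
import colorsys


def hue_degrees(rgb):
    """Hue angle in degrees from the standard-library HSV conversion."""
    h, _s, _v = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0


def expose(rgb, gain):
    """Scale linear RGB by an exposure gain, with no clipping."""
    return tuple(c * gain for c in rgb)


def clip(rgb, ceiling=1.0):
    """Clamp each channel independently, like a saturated sensor or 8-bit output."""
    return tuple(min(c, ceiling) for c in rgb)


# Hypothetical camera-space reading of a blue LED with some green/red spill.
led = (0.1, 0.4, 1.0)

for gain in (1.0, 2.0, 3.0):
    raw = expose(led, gain)
    print(f"gain={gain:.0f}  unclipped hue={hue_degrees(raw):.0f}  "
          f"clipped hue={hue_degrees(clip(raw)):.0f}")

# gain=1  unclipped hue=220  clipped hue=220
# gain=2  unclipped hue=220  clipped hue=195
# gain=3  unclipped hue=220  clipped hue=180
```

The unclipped column is roughly what Darktable's default pipeline is trying to preserve; the clipped column is the kind of hue rotation the camera JPG shows.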

This is all well and good, but technically correct and ugly is still ugly. I don't disagree: the Fuji JPG output is more pleasing in my opinion, as I find the jarring, harsh blue gradient distracting. So let's fix it in Darktable.

First, find the highlight reconstruction module and change the mode from inpaint opposed to clip highlights, then adjust the clipping threshold down until the blue highlights begin to turn cyan. I found a value of about 0.4-0.5 worked well.

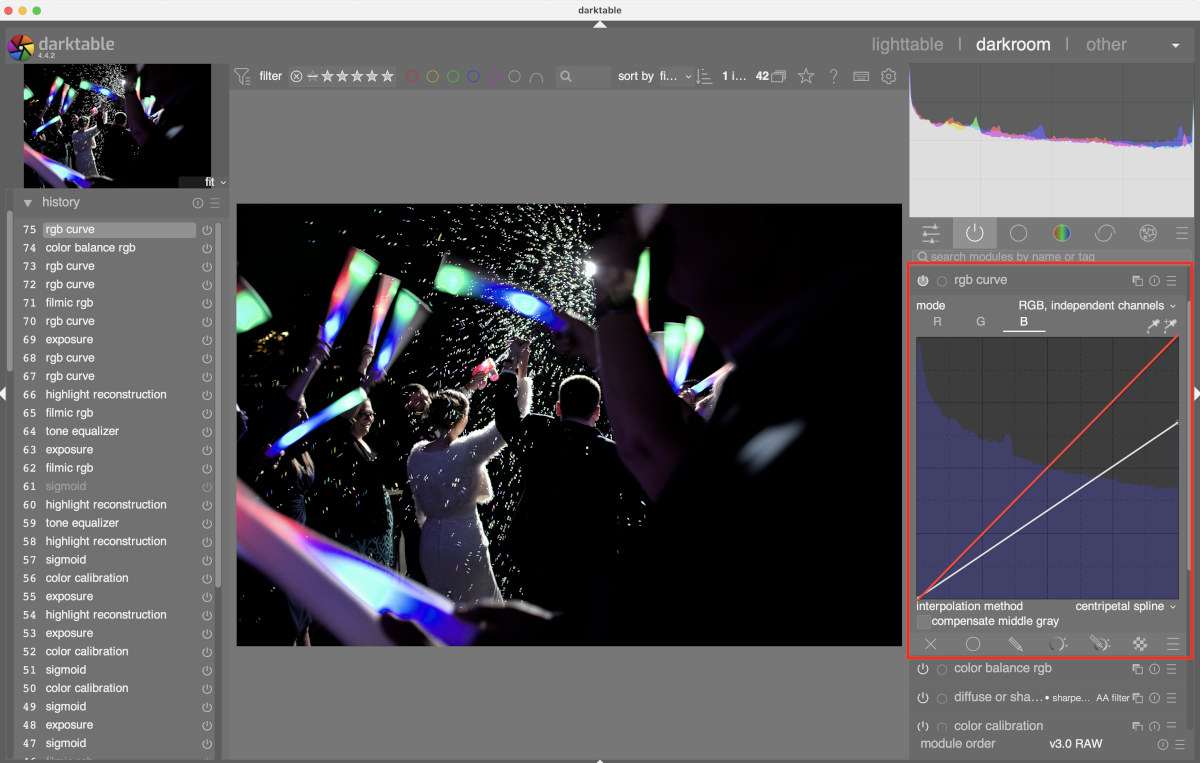

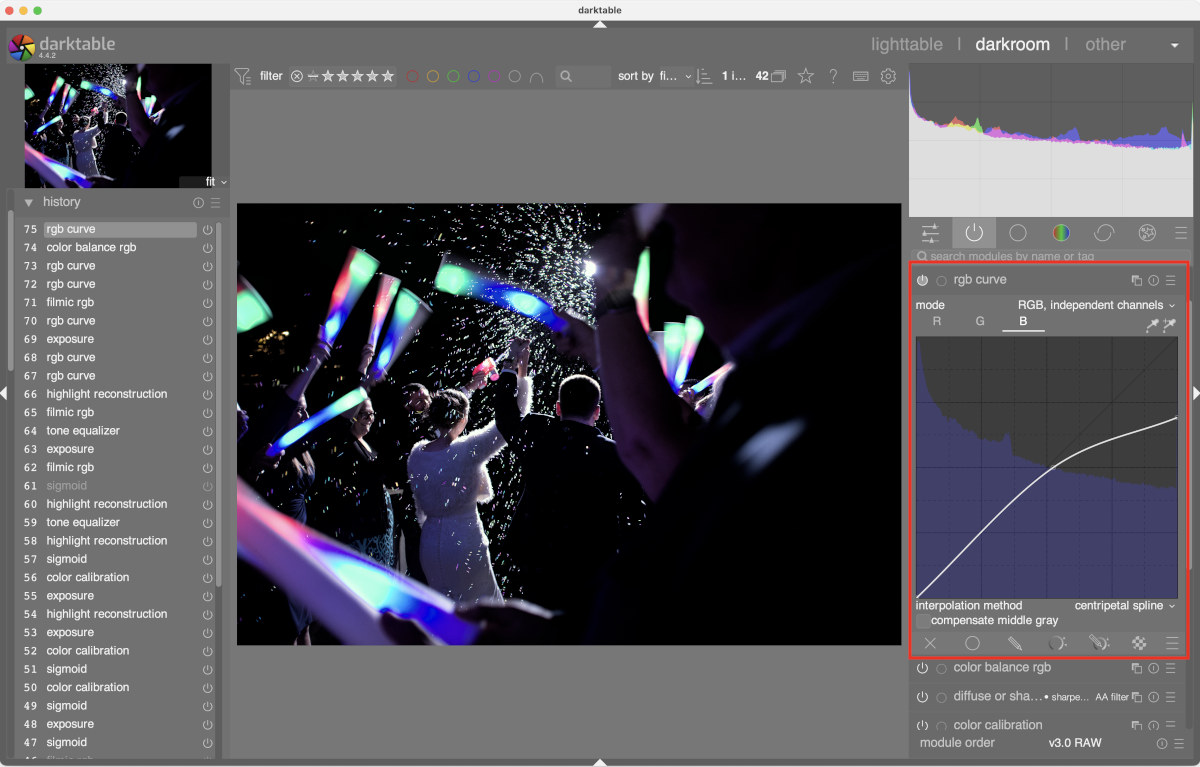

Next, find the rgb curve module, change the mode to "RGB, independent channels", select the blue channel and drag the right end of the curve down along the right-hand edge of the graph. In this screenshot the red line represents the default linear curve the module starts out with. Everything between that and the lowered white line has now been clipped from the image. The desired effect is achieved when the cyan or teal starts to intensify.

Then smooth out the curve by adding a few more points so the gradients are less jarring. This is most noticeable in the darker parts of the cyan-to-blue gradient, but it's still very subtle.
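The rgb curve step can be thought of as a lookup function applied to the blue channel alone. The Python sketch below approximates it with straight-line segments between control points (Darktable itself fits a smooth spline through the points, so this is a simplification). The control points, with the right endpoint pulled down to 0.7 and an extra point keeping the shadows close to linear, are hypothetical values rather than the ones from my edit. Lowering blue relative to green raises green's share of the pixel, which pushes the hue toward cyan.

```python
import bisect
import colorsys


def piecewise_curve(points):
    """Return f(x) that linearly interpolates sorted (x, y) control points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]

    def f(x):
        if x <= xs[0]:
            return ys[0]
        if x >= xs[-1]:
            return ys[-1]
        i = bisect.bisect_right(xs, x) - 1  # segment containing x
        t = (x - xs[i]) / (xs[i + 1] - xs[i])
        return ys[i] + t * (ys[i + 1] - ys[i])

    return f


# Hypothetical control points: identity in the shadows, endpoint pulled to 0.7.
blue_curve = piecewise_curve([(0.0, 0.0), (0.3, 0.3), (1.0, 0.7)])


def apply_blue_curve(rgb):
    """Run only the blue channel through the curve; red and green pass through."""
    r, g, b = rgb
    return (r, g, blue_curve(b))


def hue_degrees(rgb):
    h, _s, _v = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0


pixel = (0.2, 0.6, 1.0)  # a bright blue highlight
print(f"before: {hue_degrees(pixel):.0f}  after: {hue_degrees(apply_blue_curve(pixel)):.0f}")
```

Adding the midpoint is what keeps darker blues (anything under 0.3 here) unchanged, which is the same reason extra points on the real curve soften the gradient.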

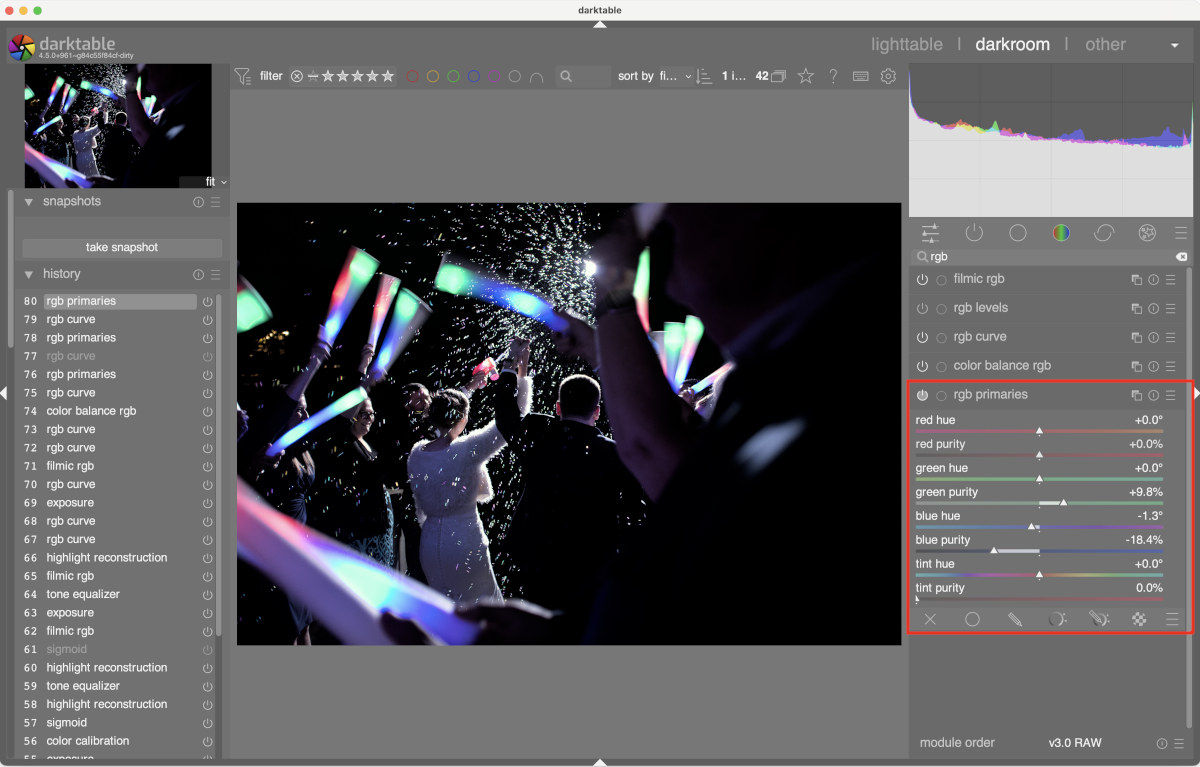

This gets the image about 90% of the way there. As a finishing step, use the rgb primaries module in the new development version of Darktable to "contaminate" the blues with some green. This module will be available in the full 4.6 release this December. Please note that since GitHub doesn't have ARM Mac runners right now, the Mac nightly build is x86_64 only. The full releases have a native ARM version, and GitHub is reportedly adding ARM Mac build hosts this fall or winter. I compiled my own from the Darktable source for Apple ARM.

After some other tweaks the image is ready for export and it's close enough to the Fuji's out of camera color treatment.

This raises the question: why not use the Fuji JPG and skip this whole business? That's a fair point, and if one is happy with the JPG output then that's fine. The problem comes when the camera does something that looks bad. Its image processor applies a standard curve and treatment to a wide array of situations, and it might not always nail it. With the RAW it's easier to adjust things in post. These days I usually shoot RAW+JPG on my Fuji gear specifically because their JPG engine is that good, but there are times when I need the malleability of the RAW. Knowing how the camera and imaging pipeline work is handy in situations like that. Just remember that the camera JPG is not the canonical version of an image, and don't get caught up in trying to represent reality exactly. Yes, the bright blue LEDs rendered by default in Darktable are closer to reality, but they are ugly as sin. Hue shifting them out to a softer, more pastel color works well for this image. Also, trying to exactly duplicate the camera JPG is a bit of a fool's errand. It's like trying to reverse engineer a cake: it's not too difficult to get close, but without the exact recipe it will never be identical.

2022 Favorite Photos

Despite 2022 being a difficult, if quiet, year for me personally, and despite not really feeling the photography thing a whole lot, I did manage to use a camera quite a bit. In no particular order, here are a few photos I took that I like for one reason or another or that were noteworthy.

From June: we have synchronous fireflies around here, and this was my attempt this summer in my backyard with the D850.

Also from June, some people on a rock face, Fuji X-H1.

This one isn’t very original or special but it got a lot of airtime on local and state news, the November 8th lunar eclipse, D850 this time as well.

Lastly, I have been slowly sliding back into studio and portrait work this year. I picked up a pair of Godox LC-500Rs and have been using the RGB modes for some shots. I haven't caught anything really original on this front this year, as it's been slow going while I get my feet back under me, but I like the IRL split-toning kind of look in this one. Fuji X-H1 again.

Now time for some stats. This year I kept 4645 photos totalling 147GB, at least so far; I tend to do a year-end cull in January, so that number will likely head down. Specific camera stats are below. Note this won't add up to the total due to some of those being scanned film, silly ancient floppy disk cameras that don't have modern metadata tags, borrowed cameras and so forth:

| Camera | Number of Photos |
|---|---|
| Nikon D850 | 1496 |
| Nikon D7500 | 573 |
| Nikon D800 | 183 |
| Nikon D1X | 53 |
| Nikon D3s | 37 |
| Fuji X-H1 | 1134 |
| Fuji X-T2 | 609 |
| Fuji X100F | 560 |

All data taken from Darktable. Look, don't judge me. I know I have a problem. It's only hoarding if you don't use it, right?

295 photos had five stars and were edited. I only use one or five star ratings, it's either good or it's not.

My most used focal length was 23mm. I suspect that's because I use the X100F a lot and use the Fuji 23mm prime often on the other two.

Age is not a good proxy for technological illiteracy

Now that I'm a few years away from 40, the trend of equating age with technological illiteracy has really started to bug me. Most recently I've seen a few articles about Congress and its advanced average age that lean on that age as a crutch to explain away its ineptitude with all things technical. It's a common trope, and one I've bought into in the past, but the more experience I get the more I see it for the meme it is. Congress is bad at technology policy because of corruption, lobbies, and willful ignorance. In my opinion they'd be very nearly this bad if the average age were 35.

First, I've met my fair share of Gen Z kids whom I'd call incompetent at understanding the tools they use. Honestly, given all the screen time kids born after 2000 have had, I'm a bit surprised at just how bad many of them are with basic concepts like a directory tree, or at understanding what different pieces of hardware do and how they interact. Granted, some of this is "standing on the shoulders of giants," in that using technology today is just a lot easier than it was 30 years ago when I was getting started. I had to know Hayes modem codes, IRQs, and TSRs. They press a button on their router for WPS, or have to enter a WPA2 password in the worst case. However, the proportion of "tech geeks" in the younger generation seems about the same as it was in my day, despite the greater exposure to technology Gen Z has had. There are plenty of experts in Gen Z; it's just not everyone under 25.

Working in a university also gives one exposure to a good mix of age groups. I know plenty of people 45 and older who know more than I do. In fact I've known a few rather tech-savvy retirees who kept up with things nicely just as a hobby. There's definitely a slowdown as one ages (I can tell you I don't pick up new languages as fast as I used to), but experience is nothing to be ignored either. Ageism is pretty rampant in the technology field overall, but that's a different rant.

Technology as a subject is also rather broad and deep. My area of expertise is generally lower-level, physical things: hardware, networking, ASM, C, some Python and virtualization. For example, I understand how a state machine and an ALU work, but give me some higher-level, abstract software engineering topic and I'm not really going to follow. Likewise I've met a few brilliant machine learning experts who didn't know the difference between RAM, cache and physical storage. I don't think either of us should be called technologically illiterate, just somewhat specialized. I'm also not an avid smartphone user (my first-gen iPhone SE still works just fine, thank you), so outside of cutting off most of the garbage I don't use, I'm really not up on that side of the world. My battery literally lasts days, that's how little I use it. However, just because a younger person can run circles around me there doesn't mean I'm now ready to be put out to pasture or am somehow technologically illiterate. Honestly, I've just drawn a line where I think that tool's trade-offs aren't worth integrating it further into my life.

I also find large chunks of the younger generation either resigned to or ill-informed about the endangered species that is privacy these days, and frankly that seems to be a problem at both extremes of the age spectrum. A lot of the 60+ crowd does not understand it either. I think Gen X and older Millennials are probably the most aware of what big tech and government are interested in and why it's a problem, and of just how insidious and inside your mind social media can be. Just because Google or Instagram is flying the flag of your pet cause does not mean they're your friend. They just want you to hand over more of that 21st-century crude oil, data, and will tell you whatever you want to hear to get it. I don't know why, maybe it's the inherent cynicism 80s and early 90s kids grew up with, but that cohort seems to have a healthy skepticism of living online overall. The point to drive home here is that you're not using these services; they're using you.

This isn't meant to be a "kids these days" rant. I also acknowledge that specific skills differ across generations. Gen Z grew up with things I did not. I just think equating younger age with greater understanding of the tools they use is a bad move. Look at someone's credentials, not their birth year, before you judge them. Age is a bad proxy for "knows what they're talking about," and some of us geezers may surprise you. Likewise I know plenty of 20-somethings I respect and would call my peers. Age has little to do with it, and we shouldn't overly favor one group over the other based on faulty assumptions. Ignorance knows no generational bounds, and neither does curiosity.

Recent Sunrises

A few recent sunrise photos, edited in Darktable 3.6.

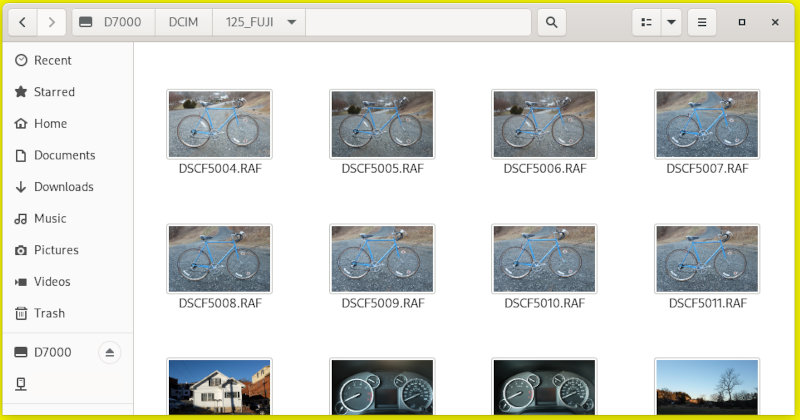

Fedora GNOME RAW Thumbnails

I've been trying to give GNOME on my desktop a fair shake the last few months as I like the way it works on my XPS 13. I've run into a few problems here and there but lacking RAW image previews in the file manager is quite the oversight in 2021. Dolphin and even Thunar do it by default in KDE Plasma and XFCE respectively. I usually run Fedora with the KDE Plasma environment on my desktop machine but the last time I tried GNOME 3 a number of years ago I seem to recall RAW thumbnails existing? Perhaps not, but here's how you fix that:

Fixing this oversight is not too terrible but does require some fiddling. First you'll need the ufraw package. Install it through the GNOME Software center or through dnf:

sudo dnf install ufraw

It'll bring a couple of dependencies with it but not too much bloat.

Next you'll need to create a file at /usr/share/thumbnailers/ufraw.thumbnailer (writing there requires root, for example via sudoedit) and add the following to it:

[Thumbnailer Entry]

Exec=/usr/bin/ufraw-batch --embedded-image --out-type=png --size=%s %u --overwrite --silent --output=%o

MimeType=image/x-3fr;image/x-adobe-dng;image/x-arw;image/x-bay;image/x-canon-cr2;image/x-canon-crw;image/x-cap;image/x-cr2;image/x-crw;image/x-dcr;image/x-dcraw;image/x-dcs;image/x-dng;image/x-drf;image/x-eip;image/x-erf;image/x-fff;image/x-fuji-raf;image/x-iiq;image/x-k25;image/x-kdc;image/x-mef;image/x-minolta-mrw;image/x-mos;image/x-mrw;image/x-nef;image/x-nikon-nef;image/x-nrw;image/x-olympus-orf;image/x-orf;image/x-panasonic-raw;image/x-pef;image/x-pentax-pef;image/x-ptx;image/x-pxn;image/x-r3d;image/x-raf;image/x-raw;image/x-rw2;image/x-rwl;image/x-rwz;image/x-sigma-x3f;image/x-sony-arw;image/x-sony-sr2;image/x-sony-srf;image/x-sr2;image/x-srf;image/x-x3f;image/x-panasonic-raw2;image/x-nikon-nrw;

Close Nautilus (nautilus -q will do it from a terminal), clear the thumbnail cache with rm -rf ~/.cache/thumbnails/*, then open a directory with RAW images. You should now see RAW photos as above. Hooray!
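For anyone curious what the Exec line actually receives: GNOME substitutes placeholders in it before running the command, `%s` with the requested thumbnail size in pixels, `%u` with the file's URI and `%o` with the path of the PNG it expects back (`%i`, the plain input path, is also available, though this entry doesn't use it). The Python sketch below mimics that substitution to print the resulting command. The example file, size and output path are made up, and the real expansion happens inside GNOME's thumbnailing code, which may quote or split arguments differently than this simplified string version.

```python
import shlex

# The Exec line from ufraw.thumbnailer above.
EXEC = ("/usr/bin/ufraw-batch --embedded-image --out-type=png --size=%s %u "
        "--overwrite --silent --output=%o")


def expand_exec(template, uri, path, output, size):
    """Replace thumbnailer placeholders; file values are shell-quoted."""
    values = {
        "%u": shlex.quote(uri),
        "%i": shlex.quote(path),
        "%o": shlex.quote(output),
        "%s": str(size),
        "%%": "%",
    }
    out, i = [], 0
    while i < len(template):
        token = template[i:i + 2]
        if token in values:      # a recognised two-character placeholder
            out.append(values[token])
            i += 2
        else:                    # ordinary character, copy it through
            out.append(template[i])
            i += 1
    return "".join(out)


# Hypothetical file and sizes, purely for illustration.
cmd = expand_exec(EXEC,
                  uri="file:///home/user/Pictures/DSCF0001.RAF",
                  path="/home/user/Pictures/DSCF0001.RAF",
                  output="/tmp/thumb.png",
                  size=256)
print(cmd)
```

Running the printed command by hand is also a handy way to check whether ufraw-batch can read a particular camera's RAW files before blaming Nautilus.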

Granted, I think Nautilus is one of GNOME's weak points, especially compared to what's available in other Linux desktop environments. Nautilus seems neglected, and at times it even makes me miss the notoriously bare-bones macOS Finder. GNOME excels in a number of areas, which makes the mediocrity of the file manager stick out. Split windows like Dolphin and more view modes than just "icons" and "list" would be a good start.

RAW thumbnailing really should be in the base install of any desktop distribution using GNOME. It's in literally everything else (except Windows 10 apparently) and has been for years. If you're running GNOME you're probably looking for more of a modern desktop experience instead of a lean and slim install anyway so a few more megabytes of software and a configuration file won't make that much more difference.

Peak Linux Desktop

We are in the peak Linux desktop era, and it might be downhill for a while. By peak Linux desktop era I mean you can pick up nearly any machine off the shelf, install Fedora, Ubuntu, Mint, Debian or Manjaro as-is and get straight to work without fiddling with the computer itself. There are some corner cases where things are more difficult, but even nVidia PRIME works well these days. In my opinion the Linux desktop is basically at parity with the proprietary options in terms of "just works." You can boot the machine up, go through the initial setup to create your account, sign into your cloud stuff and boom, you're done, just like any other desktop OS. The image of Linux being about messing with the computer is really outdated. It's a good productivity tool, as much so as Windows or macOS in my opinion. Yes, it's different and takes some adjustment to the new workflow, just as if one were to switch from Windows to Mac or vice versa, but it's not inherently broken. And no, you don't have to touch the CLI for anything on the major desktop environments if you don't want to.

A lot of this is due to monumental efforts on the part of volunteers but also companies like Red Hat and Canonical. However there are two major players who have contributed huge amounts of code, time and funding to the Linux ecosystem that people seem to forget about: AMD and Intel. Intel is a major part of the reason why modern USB standards work on Linux and AMD has documented and open sourced their graphics drivers. Intel and AMD pay developers to work on this, upstream code into the kernel and have generally been decent community members. Even with all their flaws we owe them some thanks for Linux hardware support being where it is along with the fleet of volunteers maintaining things at organizations like freedesktop.org.

Late last year Apple released ARM-based Macs. Microsoft has just announced a translation layer to allow x64 code to run on ARM versions of Windows, and has shipped ARM machines for years. Many OEMs have been shipping ARM Chromebooks for a while too. The Apple announcement came with their usual showmanship and is going to make the rest of the world take notice. As with everything else Apple does, the rest of the industry will be tripping over themselves to follow suit. I wouldn't want to be Intel or AMD right now, or be holding their stock. ARM is likely the future for most devices outside of enthusiast desktops or legacy applications. I think even enthusiast desktop platforms will switch over, but that's just my opinion. Yes, I realize Chromebooks are technically Linux, as is Android. This is more about the FreeDesktop type of future. Chromebooks and smartphones are still very locked-down devices and do not support the full range of what the Linux desktop can do today.

This brings us back to peak Linux desktop. Linux runs on armhf and arm64 just fine. The problem is that the ARM ecosystem is a mess of cobbled-together proprietary things. Even if you aren't dealing with a locked bootloader, power management, boot processes, I/O and graphics vary tremendously from one ARM platform to the next. That's unlike x86 and x64, where UEFI, ACPI and other well-documented standards are implemented across nearly the entire range. Very few ARM vendors are working on open sourcing and upstreaming support for their platforms into the Linux kernel, either. Even the beloved Raspberry Pi relies on out-of-tree patches for full support. Most of the other consumer-facing ARM boards out there for Linux rely on reverse engineering in some part or another, even if just for the Mali graphics.

There is hope: a standard called ARM ServerReady mandates UEFI and ACPI for compliance. This solves the boot process and power management problems. Despite the data-center-centric name, this standard works fine on desktops and laptops as well. Microsoft's Surface products used ARM ServerReady, as does the ARM-based Lenovo Yoga. Tyan and Gigabyte have been selling a range of ARM servers that fully support it as well. Indeed, you can grab an arm64 Debian installer and slap it right on the Tyan and Gigabyte machines. They work great as long as you don't need graphics.

Alas, this only solves part of the problem, as you're still dealing with a lack of driver support. Mali graphics have a reverse-engineered driver that had mostly been accepted into the kernel the last time I checked, but like most reverse-engineered things it's usable but not fully featured, a very similar feeling to the Nouveau driver for nVidia cards. Most ARM options for Linux on the desktop or mobile right now are low-performance patchwork things like this.

What we need is for an ARM vendor to step up like Intel and AMD have and work hard on upstreaming support into the Linux kernel for all their hardware, and for ServerReady to become the default ARM platform. Huawei is probably the closest on this, as the Chinese tech sector is ditching Western software firms in favor of Deepin and Ubuntu Linux. I think a lot of the FUD about Huawei hardware is just US government sabre rattling. I've seen no proof of it, and honestly these days it's pick your backdoor if you're using anything from a Five Eyes country anyway. Until someone starts working hard on upstreaming support and ServerReady becomes the de facto standard, Linux on ARM is going to be a mess and will revert to hobbyist-only territory for the desktop. I've been using Linux on and off since the late '90s and early '00s. I remember the good-old-bad-old days before Intel and AMD got on board, and I don't want to go back. That's where the "4 hours and 6 kernel recompiles to get your network card to connect" meme came from. I've got other things to get done now and cannot spend that kind of time minding my machine.

Don't get me wrong, I don't think the Linux desktop is going anywhere. But I think the mid-term future looks more like the Raspberry Pi or Pinebook Pro and less like a ThinkPad or XPS with Fedora, Mint or Ubuntu on it. It will be a tool for people to build one-off projects on, not the robust desktop we have today. There may be an open, performant, upstreamed and widely available ARM platform for Linux in the future, but I think in the meantime we're in for a decade of pain. Again, I could be wrong; that happened once before in the 80s.

CentOS, Fedora and Trust

Last week Red Hat made an earth-shaking announcement and ended CentOS 8 more than seven years ahead of schedule. Understandably, this caused quite the stir in the Linux community. CentOS started off life as a community rebuild of RHEL, built from the source Red Hat released to comply with the GPL. It existed independently of Red Hat until 2014 and was quite popular. There were several community rebuilds of RHEL back then, but CentOS was the survivor; Scientific Linux, the other major player, folded up shop last year. Even if you paid for RHEL, you probably used CentOS in testing or development environments. Many RHEL admins trained and learned on CentOS too. I remember that when Red Hat acquired the CentOS project in 2014 there was a lot of hand-wringing over what this meant, as CentOS cut directly into Red Hat's bottom line. These community worries came back up in 2018 when IBM acquired Red Hat. Although it took six years, it seems many of the doubters were proven correct last week.

I'm not a huge RHEL or CentOS user myself. We use RHEL and CentOS in the office in some places where we are required to, but for the most part my infrastructure pieces are Debian. Among many other things, I like the governance and structure of the Debian project much more, along with the "universal operating system" approach they take. I've run Debian as a desktop as well and it works just fine there too; it has become easier to do with backports, and with testing becoming usable and getting more timely security patches. Debian stable is probably my favorite server operating system to work with. So the direct impact of CentOS being taken out back and shot on my day-to-day life is minimal. However, since 2016 or so I've been using Fedora as my desktop operating system. I'm not a fan of Ubuntu for a few reasons, and Fedora had what I thought were pretty sane defaults, handled the HiDPI screen on my laptop better and flat out looked the best. I mostly ran the KDE Plasma spin at the time, but over the last 8-12 months or so I've even adopted GNOME on my laptop. Fedora is the project that CentOS and RHEL are ultimately derived from, and Red Hat/IBM has a controlling stake in the Fedora Project. I'm also a pretty big Ansible user and have been since before it was bought by Red Hat.

This is where I become concerned. Two of the major projects I use on a daily basis, Fedora and Ansible, are owned and controlled by a company that just threw part of the Linux community under the bus. Yes, I understand that they have a profit center to protect and CentOS was directly impacting it. Part of the problem was certainly poor communication beforehand that CentOS was a "best effort" product and not really guaranteed, but cutting CentOS 8 off in 2021 when many people were counting on it until 2029 is brutal, no two ways about it.

Right now Fedora is likely safe, as is the free version of Ansible. Still, I can't say I'm entirely comfortable with Red Hat having such a large stake in Fedora. Fedora makes a big deal about being community driven, but Red Hat still has a controlling stake in the project and puts up a lot of infrastructure for it. On paper Fedora is independent, but in practice it's entirely reliant on Red Hat, and Red Hat is a substantial driving force in the project. What does this mean going forward? Will Red Hat or IBM start wanting telemetry or some other dubious thing implemented in Fedora? I'm not saying they will, but I think a lot of free software users and fans would feel much better with a more independent Fedora. Even if it means they need NPR- or PBS-style pledge drives every year to pay for infrastructure, I think that would be preferable to so much reliance on Red Hat and IBM. I'd gladly chuck some money at a Fedora Foundation if it meant they could tell IBM to pound sand when the community thought something from corporate was a bad move.

Red Hat likes to crow a lot about open source and community, but this move has burned a lot of bridges. In the short term I suspect they'll net a few more RHEL subscriptions from those who can afford to convert over. But the memory of the community is long, and this move may burn them in the long term. Trust isn't easily earned, and most of us are here precisely because we don't trust closed software vendors like Apple and Microsoft. Right now I've switched back to Debian on my laptop, and I may move my workstation back to it in the coming weeks, but we will see. For now it's still on Fedora 33.

Debian isn't perfect, but it's at least free from a lot of corporate meddling and is a more truly community-driven project. I mean, they even have a package on anarchism in their distro.

I don't mean this as a knock against the fine folks at the Fedora Project. It's a great piece of software and I still say it's the best "get it installed and get to work" type Linux out there. I still highly recommend it to anyone looking to start out in Linux, Debian isn't nearly as easy to get started with. Fedora also doesn't shove proprietary package managers in behind your back like Ubuntu. I really care about the direction of and enjoy the Fedora Project as a whole. I just don't trust the organization paying your power bills at the moment.

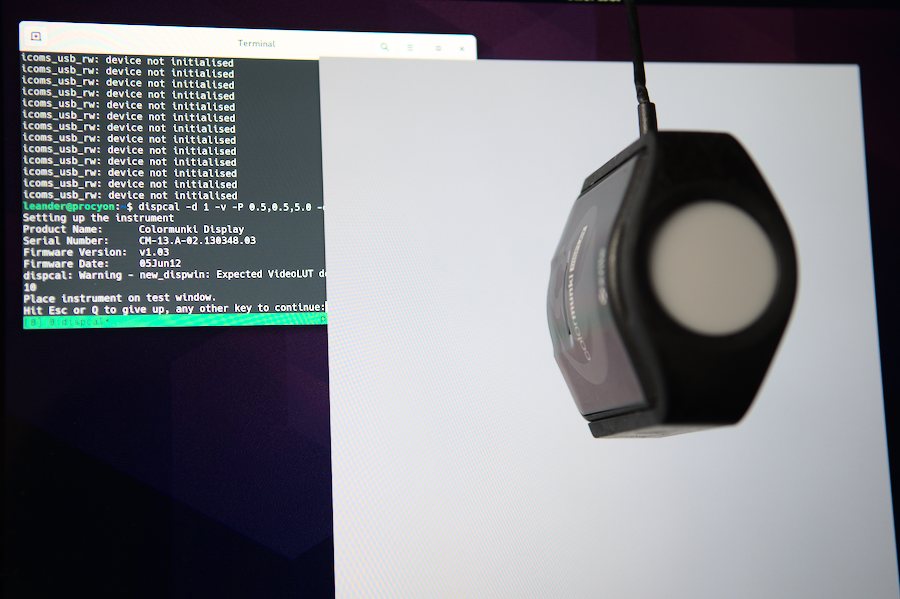

ArgyllCMS Display Calibration

Most photo and graphics people are probably familiar with DisplayCAL as a monitor calibration tool. It's more thorough than the software that ships with X-Rite devices, even if you're using one of their supported platforms like macOS or Windows. Unfortunately DisplayCAL's GUI relies on Python 2, which is end-of-life and is being removed from a number of Linux distributions. Fortunately the GUI is just a front end for ArgyllCMS, and it is quite easy to calibrate a monitor with just Argyll installed. The command is as follows:

dispcal -d 1 -v -P 0.5,0.5,3.0 -o 9300_Internal_Display

The options are as follows:

-d 1: the number of your display. With multiple displays this will be 1, 2, 3 and so on; running dispcal with no options prints its usage, which lists which display gets which number. If you have just one display, use -d 1.

-v: verbose output

-P: test window position and scale, useful for HiDPI displays. The values are horizontal position, vertical position and scale, where 0.0 is left/top and 1.0 is right/bottom. Here I have the window in the middle of the screen (0.5,0.5) at 3.0x scale.

-o: Output file to save the profile to.
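If you calibrate more than one display, or redo the calibration periodically, it can help to script the command. Below is a minimal Python sketch that assembles the same dispcal argument list from named parameters and sanity-checks the position values (Argyll treats 0.0 as left/top, 0.5 as center, and 1.0 as right/bottom). The helpers build_dispcal_args and run_dispcal are hypothetical, not part of ArgyllCMS, and the sketch only uses the options described above.

```python
import shlex
import subprocess


def build_dispcal_args(display=1, x=0.5, y=0.5, scale=3.0,
                       name="9300_Internal_Display", verbose=True):
    """Assemble a dispcal command line (hypothetical helper, not part of Argyll)."""
    if display < 1:
        raise ValueError("dispcal numbers displays starting at 1")
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("window position must be between 0.0 and 1.0")
    args = ["dispcal", "-d", str(display)]
    if verbose:
        args.append("-v")
    # -P x,y,scale: where to put the test patch window and how much to enlarge it
    args += ["-P", f"{x},{y},{scale}"]
    # -o: name to save the resulting profile under
    args += ["-o", name]
    return args


def run_dispcal(args):
    """Run dispcal interactively; needs Argyll on PATH and the colorimeter attached."""
    subprocess.run(args, check=True)


if __name__ == "__main__":
    # Print the command rather than running it, so it can be reviewed first
    print(shlex.join(build_dispcal_args()))
```

With the defaults this prints the same command shown above; pass the result to run_dispcal once you're happy with it.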

If you don't have a HiDPI display (Apple calls these Retina displays), the -P option might not be needed. I use it because without scaling, the color patch dispcal draws is too small to cover the colorimeter. The software gives you the option to make some display adjustments before calibrating. If you're on a laptop or another display without RGB adjustments, you can just press 7 to go straight to calibration and let dispcal do its thing.

Once the ICC profile is created you can import it into your desktop environment's color correction tool and apply it to the correct display.
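Before importing, it can be worth a quick check that the file dispcal wrote really is a display profile. Every ICC profile starts with a 128-byte header: bytes 36-39 hold the "acsp" signature and bytes 12-15 hold the device class, which is "mntr" for a monitor profile. The Python sketch below reads a few of those header fields; describe_icc_header and is_display_profile are hypothetical helpers, and the script assumes you pass the profile's path on the command line.

```python
import struct
import sys


def describe_icc_header(data: bytes) -> dict:
    """Pull a few fields out of an ICC profile's 128-byte header."""
    if len(data) < 128:
        raise ValueError("too short to be an ICC profile")
    if data[36:40] != b"acsp":
        raise ValueError("missing 'acsp' signature, not an ICC profile")
    (size,) = struct.unpack(">I", data[0:4])  # total profile size, big-endian
    return {
        "size": size,
        "device_class": data[12:16].decode("ascii"),         # 'mntr' = display
        "color_space": data[16:20].decode("ascii").strip(),  # e.g. 'RGB'
        "pcs": data[20:24].decode("ascii").strip(),          # 'XYZ' or 'Lab'
    }


def is_display_profile(data: bytes) -> bool:
    return describe_icc_header(data)["device_class"] == "mntr"


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], "rb") as f:
        header = f.read(128)
    print(describe_icc_header(header))
    print("display profile" if is_display_profile(header) else "not a display profile")
```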

Micca OriGain AD250

I recently upgraded my desktop audio. For about the last fifteen years I used a 5.1 setup with a Logitech Z-5500, which has been OK. Nowadays I listen to music more than anything else, where stereo matters more than surround, not to mention the reclaimed desk real estate. I had a set of Sony bookshelf speakers lying around in storage, so I went looking for a desktop amp. My requirements were an optical input plus USB or analog inputs for secondary devices. Bluetooth wasn't a requirement, as I can use PulseAudio on Linux to turn my desktop or laptop into a Bluetooth receiver, but it would have been nice to have built in. I'm also a stickler for physical buttons and switches for changing inputs.

The Micca OriGain AD250 was still available from the usual online retailers even after stocks of other amps dried up due to COVID-related import problems. My other options were a couple of SMSL models, but I honestly like the looks of the Micca better. It's 50W per channel, which is more than enough for a desktop setup, and it has very simple front-facing controls.

My desktop machines have had optical out for years and I always use it. It's a nice way to bypass the onboard sound card, which is usually mediocre at best and may have proprietary features or codecs that don't fully work in Linux. Optical is becoming far less common on laptops, though, so I wanted something with either USB or analog in for times when I need it. Plus I still have an iPod or two lying around.

The AD250 is a compact and good-looking box. I like having a physical volume control, and the input selector switches are great to use. The power brick is a bit large, and you'll want to relocate it off the desk.

Overall it sounds good and the output is well suited to a desktop setup. I wouldn't expect this to fill a large room.

The major downside has been popping when using the optical input. It sounds like a power-on pop, and I thought it had to do with the power-saving functionality on modern motherboards powering down the LED on the optical output. It's pretty easy to disable this feature in Linux by putting the following line in /etc/modprobe.d/snd_hda_intel.conf:

options snd_hda_intel power_save=0 power_save_controller=N
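To confirm the setting actually took effect after a reboot or module reload, you can compare the config line against what the loaded driver reports under /sys/module/snd_hda_intel/parameters. The Python sketch below parses a modprobe options line and reads the live power_save values. The helper names are hypothetical, and it assumes snd_hda_intel is the driver handling your optical output.

```python
from pathlib import Path

# Where the kernel exposes the loaded driver's current parameter values
PARAM_DIR = Path("/sys/module/snd_hda_intel/parameters")


def parse_options_line(line: str) -> dict:
    """Parse an 'options <module> key=value ...' line from /etc/modprobe.d."""
    fields = line.split()
    if len(fields) < 2 or fields[0] != "options":
        raise ValueError("not a modprobe options line")
    params = {}
    for item in fields[2:]:
        key, _, value = item.partition("=")
        params[key] = value
    return {"module": fields[1], "params": params}


def power_save_disabled(params: dict) -> bool:
    # power_save is a timeout in seconds where 0 means disabled;
    # power_save_controller is a boolean where N (or 0) keeps the controller powered
    return (params.get("power_save") == "0"
            and params.get("power_save_controller", "").upper() in ("N", "0"))


def read_live_params() -> dict:
    """Return the power_save* values the loaded driver reports, or {} if not loaded."""
    if not PARAM_DIR.is_dir():
        return {}
    return {p.name: p.read_text().strip()
            for p in PARAM_DIR.iterdir() if p.name.startswith("power_save")}


if __name__ == "__main__":
    live = read_live_params()
    if not live:
        print("snd_hda_intel is not loaded")
    else:
        print(live, "disabled" if power_save_disabled(live) else "still enabled")
```

If the live values still show power saving enabled, the module was probably loaded before the config file was read, and a reboot is the simplest way to pick up the change.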

That should handle it on most onboard sound cards. The popping still happens, just less frequently, so it may be either a power supply issue on the Micca or the circuit design around the amp. The fact that it doesn't happen on the analog input makes me think it's the Micca's power supply, or perhaps the amp lacks a delay circuit. There's a slight chance it's my Sony SS-B1000 speakers, as they are 8 Ohm and that's right at the upper end of what the AD250 will drive. I've tried numerous optical cables and no dice; it still pops. I've noticed it some on the USB input as well, just not as badly. Unless I can get the popping solved, it may end up being a deal breaker for my use. Maybe a second revision of this amp will fix the optical port. I've read comments elsewhere on the internet saying the optical input noise isn't isolated to my amp, so it seems to be a power supply issue, a filter issue, or some other design problem with the AD250.

It's most annoying when you're scrolling through a web page with many auto-playing media sources. For whatever reason, even if the audio is muted, each video clip that starts up causes the amp to wake up and pop.

Overall I like the look, feel, feature set, and user interface of the amp, but for what's a $100 desktop amplifier I find the popping annoying. From what I came across in some internet searches, the popping isn't unique to my copy either, so there seems to be a quality control issue or some engineering or component issue with these. I do use a splitter on my desktop's optical output, as I also send it to an OriGen G2 for my headphones, and neither device seems to like the active splitter I have. Once the machine powers on, they will just blast static at you if the optical input is still selected. I switched to a passive splitter and they behave much better. For now I plan on hanging on to the AD250 and seeing if I can figure out what the deal is with the popping. I have a 2015 MacBook Pro with an optical output as well that I may drag out of the pile to see if it pops with that machine. It could still be some driver or power-save feature on my desktop causing it. The old Logitech system didn't have these problems with either machine.

For now I can say that if you're using the USB or analog input, this is a great little desktop amp with an awesome user interface. Just flip the switch to your input and turn the knob for volume. No remote, no digital display to break, just simple, classic, and solid, which suits my tastes quite well. It drives reasonably sized bookshelf or desktop speakers just fine and sounds very detailed, I think. If you're a basshead it's probably not the amp for you. The optical input is still suspect in my opinion, but chances are most people aren't using optical in 2020, so that may not be a deal breaker for you. Since I'm using the USB input on my docked laptop and the optical on my desktop, though, I really need both digital inputs.