Winter

Just a couple of shots from last week's snowstorm.

Shot with a Fujifilm X100s and processed in Darktable. This was the first real snow of the 2017-2018 winter, a few inches here in downtown Boone, NC.

RTL-SDR NOAA Weather Radio Streamer in Linux

If you’re into amateur radio you’ve probably heard of these cheap DVB-T tuner dongles repurposed as software-defined radios. They’re very popular for building scanners and streaming setups. I’ve got a couple of models that I use with Gqrx for listening to traffic on the local repeaters, weather radio and a few other things.

This weekend I finally had enough time to sit down, set up rtl_fm and try out a streaming solution for listening to NOAA weather broadcasts. I’ve streamed scanner and weather radio traffic using Icecast before, but that was with external radios and a mic input. This time I wanted to use a machine with no sound card (my home server). All of this was done on Debian Linux 8. To get started we need three pieces of software:

rtl-sdr ezstream icecast2

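All are available in the default repositories so just install them with apt (apt-get install rtl-sdr ezstream icecast2). The encoding step further down also uses lame, which is packaged the same way.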

Next I had to configure icecast2 (on Debian the file is /etc/icecast2/icecast.xml). The most basic configuration should work, but at least change your admin and source passwords in the authentication block:

<authentication>

<!-- Sources log in with username 'source' -->

<source-password>password123</source-password>

<!-- Relays log in username 'relay' -->

<relay-password>password123</relay-password>

<!-- Admin logs in with the username given below -->

<admin-user>admin</admin-user>

<admin-password>password123</admin-password>

</authentication>

That should at least allow you to connect and get up and going. There are other options to secure and/or tweak but I’m not going to cover those here.

I used my NooElec Nano SDR as a test source. The rtl-sdr package comes with a program to handle FM tuning called rtl_fm. There are a few options to tinker with, but only these are critical for operation:

rtl_fm -f 162.500m -M fm -

-f 162.500m: sets the tuner frequency to 162.500 MHz

-M fm: tells the tuner to use standard narrow FM demodulation. If you want to listen to commercial radio you’d use wbfm (wideband FM) instead.

-: directs output to stdout

Send that output to lame for encoding:

lame -r -s 24 -m m -b 64 --cbr - -

-r: assume raw pcm input

-s 24: tell lame the raw input is sampled at 24 kHz

-m m: mono mode, NOAA doesn’t broadcast in stereo

-b 64: set bitrate to 64kbps, it’s more than enough for this

--cbr: constant bitrate

- -: read from stdin, write to stdout

A quick note here: you’re most likely going to have to mess with the sample rate in LAME to get things sounding right. I arrived at 24 kHz; much higher or lower and the pitch is off. The right value may be different with your model of SDR, etc.
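The pitch shift has a likely explanation. rtl_fm writes headerless 16-bit samples at its own output rate, which its usage text lists as 24k by default. lame -r has no header to read that rate from, so -s has to be given the same number. Here is a minimal sketch that keeps the two in step, assuming the stock rtl_fm defaults. The RATE_HZ variable and the two helper functions are made up for illustration, and they only print the commands rather than run them:

```shell
#!/bin/sh
# Hypothetical helper: one variable drives both ends of the pipe so the
# two rates cannot drift apart. Assumes rtl_fm emits raw 16-bit mono PCM
# at the rate given with -s.
RATE_HZ=24000

# rtl_fm takes the rate in Hz (suffixed forms such as 24k also work).
rtl_fm_cmd() {
    echo "rtl_fm -f 162.500m -M fm -s $RATE_HZ -"
}

# lame -s takes the input rate in kHz, so convert.
# Integer math: fine for whole-kHz rates such as 24000.
lame_cmd() {
    echo "lame -r -s $((RATE_HZ / 1000)) -m m -b 64 --cbr - -"
}

# Print the two halves for review rather than running them.
rtl_fm_cmd
lame_cmd
```

If the audio still sounds fast or slow, changing RATE_HZ in one place moves both commands together, which makes the trial and error a little less fiddly.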

Lastly ezstream needs configuring. It took a while to get a working MP3 configuration sorted out but I eventually arrived here:

<ezstream>

<url>http://localhost:8000/WNG588</url>

<sourcepassword>password123</sourcepassword>

<format>MP3</format>

<filename>stdin</filename>

<!--

Important:

For streaming from standard input, the default for continuous streaming

is bad. Set <stream_once /> to 1 here to prevent ezstream from spinning

endlessly when the input stream stops:

-->

<stream_once>1</stream_once>

<!--

The following settings are used to describe your stream to the server.

It's up to you to make sure that the bitrate/quality/samplerate/channels

information matches up with your input stream files.

-->

<svrinfoname>WNG from Mt Jefferson, NC</svrinfoname>

<svrinfourl>https://hubble.buttonhost.net</svrinfourl>

<svrinfogenre>Public Information</svrinfogenre>

<svrinfodescription>NOAA Weather Radio from Mt Jefferson NC</svrinfodescription>

<svrinfobitrate>64</svrinfobitrate>

<svrinfochannels>1</svrinfochannels>

<svrinfosamplerate>44100</svrinfosamplerate>

<!-- Allow the server to advertise the stream on a public YP directory: -->

<svrinfopublic>0</svrinfopublic>

</ezstream>

Save this to /etc/ezstream.xml using your favorite text editor and pass it to ezstream thusly:

ezstream -c /etc/ezstream.xml

The whole thing piped together looks like this:

rtl_fm -f 162.500m -M fm - | lame -r -s 24 -m m -b 64 --cbr - - | ezstream -c /etc/ezstream.xml

I just stuck that whole string into a shell script. If you want it to start at boot time you can shove it into /etc/rc.local for a quick and dirty solution.
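Because stream_once is set, ezstream stops whenever its input stops, for example if the dongle drops off the USB bus. Here is a minimal sketch of such a shell script with a retry loop added. The retry loop, the "run" argument, the variable names and the RETRY_DELAY value are illustrative assumptions, not the exact script used here:

```shell
#!/bin/sh
# Sketch of a wrapper script. The retry loop and the "run" guard are
# illustrative assumptions, not the author's exact script.
FREQ="162.500m"
CONFIG="/etc/ezstream.xml"
RETRY_DELAY=10   # seconds to wait before restarting; arbitrary choice

# The same three-stage pipeline as above, wrapped in a function.
run_pipeline() {
    rtl_fm -f "$FREQ" -M fm - \
        | lame -r -s 24 -m m -b 64 --cbr - - \
        | ezstream -c "$CONFIG"
}

# Only start streaming when called with "run", so the file can be
# syntax-checked with `sh -n` or sourced without touching the hardware.
if [ "${1:-}" = "run" ]; then
    while true; do
        run_pipeline
        echo "stream pipeline exited, retrying in ${RETRY_DELAY}s" >&2
        sleep "$RETRY_DELAY"
    done
fi
```

Saved as, say, /usr/local/bin/wx-stream.sh (a hypothetical path) and made executable, a line such as `/usr/local/bin/wx-stream.sh run &` placed before the final `exit 0` in /etc/rc.local would start it at boot.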

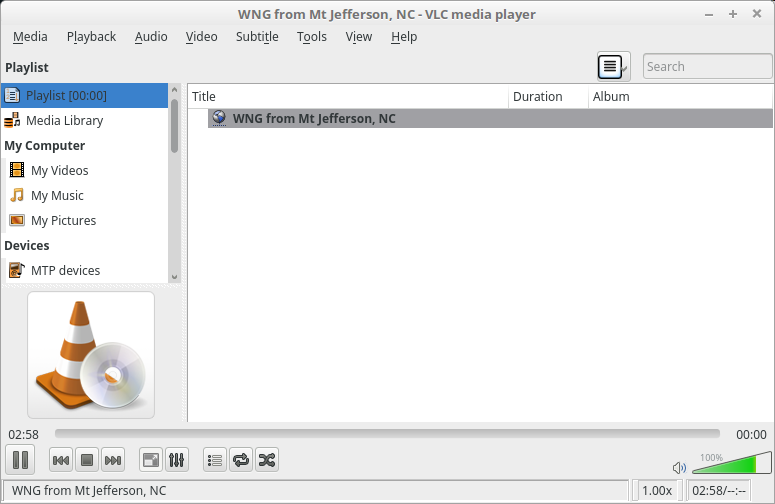

Once all that is done it’s a simple matter of navigating to your Icecast server at http://whatever_url_u_have.com:8000 and clicking the m3u icon by the stream listed there. Open that file in whatever music player you want and enjoy. I use VLC, Rhythmbox or iTunes (when I find myself on a Mac) myself. Otherwise you can just check the weather app on your phone like a normal person. Next up I want to work on getting frequency scanning working so I can get the scanner back online.

Oh and you can check out the fruits of my work here: http://hubble.buttonhost.net:8000/WNG588.m3u

Windows 10 is pushy

Windows 10 is pushy and aggravating. So glad I only really have it in a VM for a couple of things. Thanks multi-core CPUs and VirtualBox!

"Would you like to make Firefox your default browser?"

-> Clicks yes

-> Doesn't actually make Firefox your default browser, but sends you to a control panel to do so.

Me: "OK, I'll just change it here" Clicks button to change it to Firefox

Windows 10 pops up an alert: "Whoa, wait a minute there partner. Did you know Edge is the best thing since sliced bread? What sort of intellectual deficiencies do you have that make you want to use something else? Are you sure that you really want to do this? I hear Hitler used Firefox."

Me: "Wut, OK this is getting silly. Just do it already!"

That took about five more steps than it should have. Linux and OS X (errr macOS) have their moments and problems but I don't know why Windows users put up with stuff like this. That last alert was obviously exaggerated for humor but it did indeed plead with me not to change away from Edge.

A Brief History of Things that Haven't Been ET

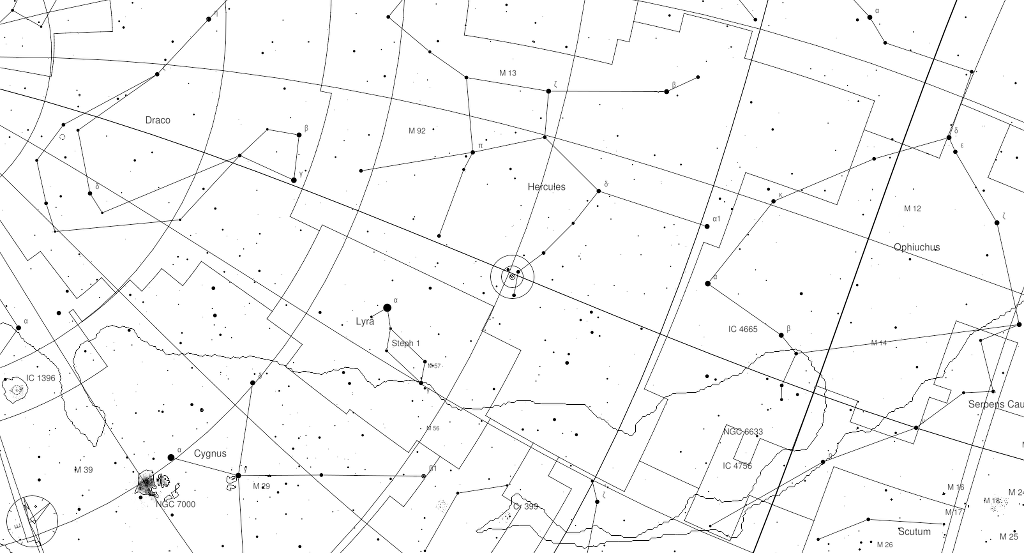

Position of HD 164595 in the constellation Hercules

With apologies to Dr Stephen Hawking for the title.

The media is running amok with stories of an ET signal from HD 164595. It's an intriguing concept, as HD 164595 is a main sequence star much like our sun with at least one known planet. However, this outburst is unlikely to be from another civilization for a number of reasons.

First, let's look at a few more promising ET candidates that turned out to be more regular natural phenomena. The most famous is probably Percival Lowell's Martian canals. In the early 20th century photography was still in its infancy, so many astronomers still did visual observing. This, combined with the human ability to insert patterns where none exist, led Lowell to publish his findings as a civilization building canals. Not to fault him entirely, as Mars does actually have a dynamic and constantly moving landscape, but it's due to seasonal dust storms, not Martians irrigating their crops.

The next promising candidate was the discovery of pulsars in the 60s. The first pulsar was found to pulse at 1.33 second intervals in the radio part of the spectrum. Antony Hewish and Jocelyn Bell Burnell ruled out human-made interference and jokingly named the radio source LGM-1 for "little green men." They didn't actually think the radio signal was from some far off alien intelligence; the name was just a joke. However, popular media at the time certainly ran with that idea. Later, after more of these regular pulsing radio sources were discovered, it was surmised they were rapidly rotating neutron stars. You know, just the corpses of long dead massive stars. Nothing terribly exciting there at all. Sarcasm heavily implied here in case you didn't pick up on that.

One of the more recent "it might be aliens" discoveries was the so-called perytons. They were only detected by the radio telescope at Parkes, and only there since 1998. All sorts of theories were tested, and after other radio telescopes failed to detect the signal and astronomical sources were excluded, the team started to focus in on local phenomena. It turned out to be the observatory's microwave oven, I kid you not.

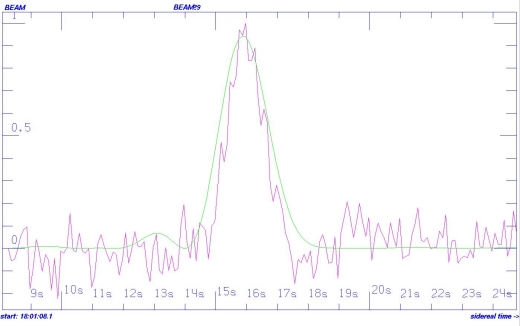

So with the colorful history of discoveries that might have been ET, let's look at the HD 164595 signal. It's a strong microwave spike. So strong, in fact, that some have theorized it could only be produced by a civilization that could harness the entire output of their parent star. It's rather unlikely that something capable of such engineering would still be using microwave transmission for communication. The signal also isn't spectrally narrow like one would expect an intentional transmission to be. There's also the fact that it doesn't particularly look like something you'd expect intelligent beings to be sending out. No obvious patterns. The argument could be made for it to be encrypted or encoded, but if you're trying to get a neighboring star system's attention you probably want them to be able to understand it. Sure, it could have not been intended for intercept, but why else would ETs be blasting out huge microwave bursts? An alien intelligence might use some mathematically significant signal or something else obviously artificially generated as a beacon to stand out against the noise. Random spectrally wide microwave bursts don't really do that. In fact, I'd say pulsars would have been a better candidate for SETI than this signal back in the day, as their signals seemed to tick more boxes on the "might be ET" checklist. Sure, HD 164595 could be another intelligent life form trying to communicate with us, but that requires extraordinary evidence. The signal could just as easily be Carl microwaving his burrito again.

The suspect signal from the paper

The simplest explanation is that it's some natural phenomenon we don't fully understand. We have observed exactly one star up close and personally and have in depth experience with one solar system. There is a lot we don't quite understand yet. This isn't the smoking gun for aliens that the media is screaming about. It certainly warrants further study though.

The Fuji x100 Series

Gear doesn't matter, until it does.

I've been slow to move to a mirrorless system. Part of the reason is my current DSLRs work more than well enough for my needs, part was lack of system maturity in the mirrorless world and the last part was lack of resources to go throwing a bunch of cash at a new system. Personally I'm still unsure if a mirrorless system can completely replace my DSLR at the moment. I do a lot of astrophotography and the lens selection combined with the high ISO performance in the Nikon world is critical. I haven't seen anything that comes close to some of the fast wide glass Nikon makes yet. Sony is getting there but they've been shooting out a new A7 body every six minutes it seems. I guess you could call that "spray and pray." While their cameras are technical marvels, the few I've laid hands on were a bit clunky to use. I also don't see the size and weight benefit of a full-frame mirrorless system over a DSLR. I'm certainly not knocking the image quality that Sony has achieved, that's for sure. But I don't see the benefit of dumping a Nikon system I'm already invested in, taking a bath on the gear and sinking about $4,000+ into bodies and lenses for what amounts to a marginal weight savings and lost access to Nikon's accessories and service. This is doubly true when you compare the A7's images to Fuji's or even Sony's own A6000 series. Outside of the need for extremely high res files I don't see a compelling reason to drop the coin on a full-frame mirrorless in general unless you don't already have a full-frame DSLR. Crop cameras make a more compelling argument to me in the mirrorless form factor. You can get actual weight and space savings and killer image quality. Again, Sony's own A6000 series illustrates that. As an aside, I haven't tested an A6300 but it has certainly piqued my interest.

Fuji is the other major player in the mirrorless world. They have the hipster look down with their retro styling, which I wasn't a fan of at first (more on that later), and their lens library isn't as varied as Sony's right now. Thus enters the X100s. "Wait," you might say, "isn't the X100t the new hotness? Why are you writing about last year's model, senility finally kick in?" Well, remember that last reason why I haven't jumped into a mirrorless system? Turns out life is expensive and photography has been on the back burner for a while. I haven't really been earning income from photography over the last couple of years either, so my gear isn't paying for itself. That being said, on the whole I think digital cameras have been more than good enough since 2009 or so. There aren't a lot of reasons to upgrade with every generation of camera like ten or fifteen years ago. I still use a D7000 as one of my secondary bodies and it's never held me back. Picking up an older model can save some cash and still net you outstanding images.

So, what was I going on about? Oh yeah, the X100s. I'm not going to bother you with the technical details of the camera too much. 16MP, 23mm (~35mm on FX) f/2 lens, some video modes, film presets, yadda yadda. More detailed specs can be found all over the web. I'm not sure what I was expecting, but I will say I underestimated this camera on quite a few points. First of all was the styling. I like functional designs, which the X100 series has covered, but the retro look rubbed me the wrong way, in a lot of the same ways that the PT Cruiser and the HHR make me roll my eyes. But after a few days using the camera in public I saw the clear advantage of having a camera that didn't look big or digital.

Usually I spend about thirty to forty-five minutes on a walk around town a few times a week. I get free exercise and it's been an opportunity to use my cameras. I've done this in the past, but even with just a 50mm prime on the D800 I had issues with people getting nervous, giving me dirty looks and generally not being friendly. Because what in the world could you be photographing in downtown besides women and children for nefarious purposes? Seriously, there are people in about one out of ten of the shots I take on the street. You folks aren't that interesting, chill out. To be fair I probably look the part (kinda fat, white, bald, male) of your stereotypical weirdo. Mix in an awkward personality and you've a recipe for getting to know your local security personnel or police. It only made my anxiety worse. For the last few years I tried to just work around it but ultimately just quit taking the camera with me. However, once I took the Fuji out things changed almost instantly. I was no longer that weirdo with that big Pervtron 800 trying to take creepshots but that cool guy with the old camera. Ok, I've never been cool in my life so maybe that's a stretch, but people actually asked me about the camera and were interested in my work. It was really kind of strange at first. But now I enjoy my little photowalks again. So, if you do any sort of travel, street or general in public type photography, the retro styling serves a purpose.

Very clever Fuji, very clever indeed.

Which brings me to the handling. Usually me and small cameras don't mix. I have sort of large hands and even small consumer DSLRs give me grief. I was expecting the X100s to be a bit more cramped and harder to operate and easier to drop. Again, I was pleasantly surprised. It's well balanced and is remarkably easy to use by feel. I'm still getting used to the whole rangefinder thing but even that's not as steep of a learning curve as I was expecting. The aperture ring and metal dials are comfortable enough, although the rubberized dials on my Nikons are a bit easier on the fingers. A few more programmable function keys would also be nice. I set the Fn to control the built in ND filter but I'd like another for ISO instead of having to duck out to the Q menu. Really that's more of a nitpick though.

On a kind-of-sort-of related note, if you're like me and do any sort of outdoor portraiture in broad daylight the X100s is going to become your new best friend. It's about the cheapest thing on the market with a leaf shutter, which means it can sync with a flash up to its max shutter speed at a given aperture. I've been using it with some cheap YN560s and a Yongnuo remote. f/2 at 1/1000" is awfully nice, f/2.8 and 1/2000"? Even nicer! I can leave my ND filters at home or forget trying to use a McNally Tree in high speed sync mode with my Nikons. My only complaint is that in dark scenes the AF assist light doesn't come on as often as it needs to. If you're using strobes without modeling lights or ones with weak modeling lights this is going to be a problem when you're trying to knock down the ambient light in an exposure. It's also more than capable in the studio. So yes, I've been using it for some actual work.

A few reviewers like the JPEG output of this camera, and while it's not bad, the defaults are a bit contrasty for my liking. The standard film preset tends a bit warm too; overall I like the Astia Soft setting the best. The RAWs, on the other hand, have an amazing amount of range. I'd put the X100s next to my D800 any day in terms of dynamic range. Blown highlights recover pretty nicely and the shadows are low noise. The lack of an anti-aliasing filter on the X-Trans sensor makes it amazingly sharp too, and the lens isn't bad either. I could make some major prints off this camera with zero problems. Darktable will sometimes struggle with the RAW files, especially if the dynamic range setting is set on auto, but making some adjustments in the Shadows and Highlights module usually clears that up.

It's not all smooth sailing though. The battery life is less than spectacular, even with a brand new battery. The power meter is particularly useless. It goes from full battery to flashing red and powering off rather quickly. The "only down one bar" stage lasts about ten minutes. Get a spare or two since they're cheap. The third party batteries work well. I've heard some people complain about the performance and interface. I can't note anything in particular as I left it in high performance mode and there it seems to handle about as well as my D800/50mm combo. Other Nikon lenses seem faster and more responsive but they're bigger too. Autofocus speed isn't bad but don't expect to shoot anything terribly fast with it. But the X100s wasn't really designed with that in mind. The menu system isn't anything to write home about but it's more than serviceable. I've seen worse that's for sure. Once you find where all the settings are it's quick to move through. The shutter speed knob is hard to get to when you have a remote flash trigger in the hot shoe. I've been doing some video things here and there lately and I can already see I won't be using the X100s for much of that. Very rudimentary abilities. Longer clip length and some more manual video controls would be nice. The LCD screen could use some improvement. It's almost overly bright and has a lot of contrast so everything looks washed out, it's sometimes good for judging composition and that's it. The lens isn't the sharpest f/2 I've ever seen either and there are times I wish it was a bit faster. But I say that second thing about every lens I've used.

Which brings me to my final point. When I first started using the Fuji I called it the Python of cameras. No, this doesn't mean it's a big snake that squeezes its food to death. It's a reference to an XKCD comic about a programming language. The X100 series, for all its faults, excels at one thing: getting out of the way. Once the camera is dialed in it just sort of goes. Its inconspicuous nature means you can have it on you most of the time and no one notices. This is where the opening line of this post comes into play: gear doesn't matter, until it does. The X100s has literally, almost single-handedly, gotten me excited about photography again. It's not going to replace my Nikon kit any time soon but it has taken over more than I thought it would. The X100s is the Miata or Lotus of cameras. It's not going to win any speed or power benchmarks but it's nimble and has an appeal all its own. Bros will still roll past you in their Mustang, GT-R, RS or other higher powered sports car and try to yell at you about power or torque curves, but at the end of the day you're the one still smiling. It's "simplify, then add lightness" in a camera format.

While I still don't think replacing my Nikon system with mirrorless is in the cards soon, the Fuji X-series just jumped to the top of my list. Even then I'll probably keep the Nikon FX kit around and use the Fuji stuff in lieu of buying any more Nikon crop sensor gear. I've shot literally hundreds of photos with the X100s over the last couple of months, more than in the last half of 2015 in total, and I can't see myself putting the Fuji down anytime soon.

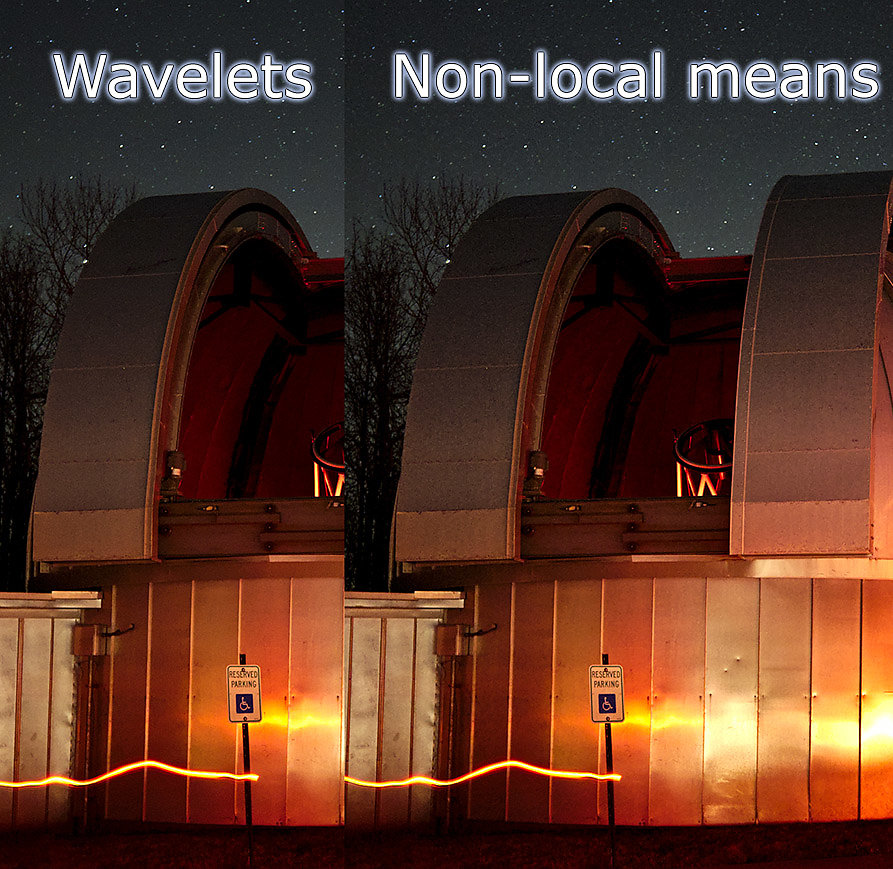

Update: Darktable Noise Reduction

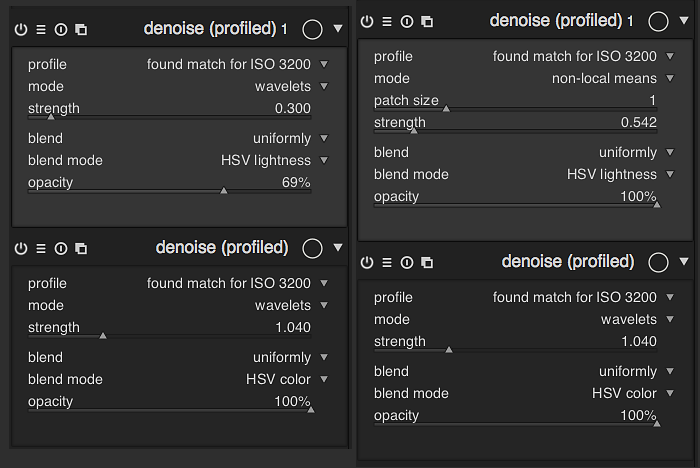

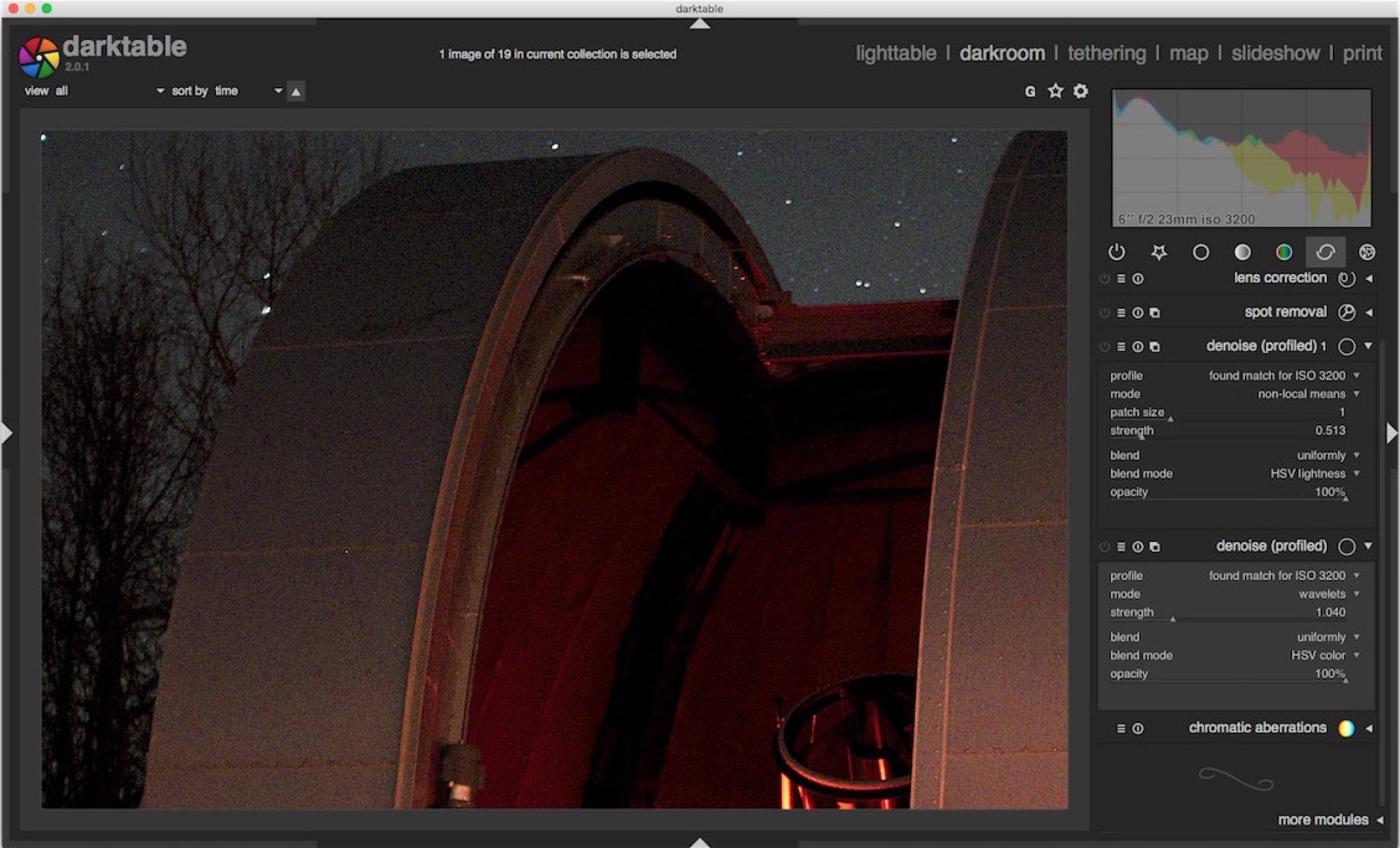

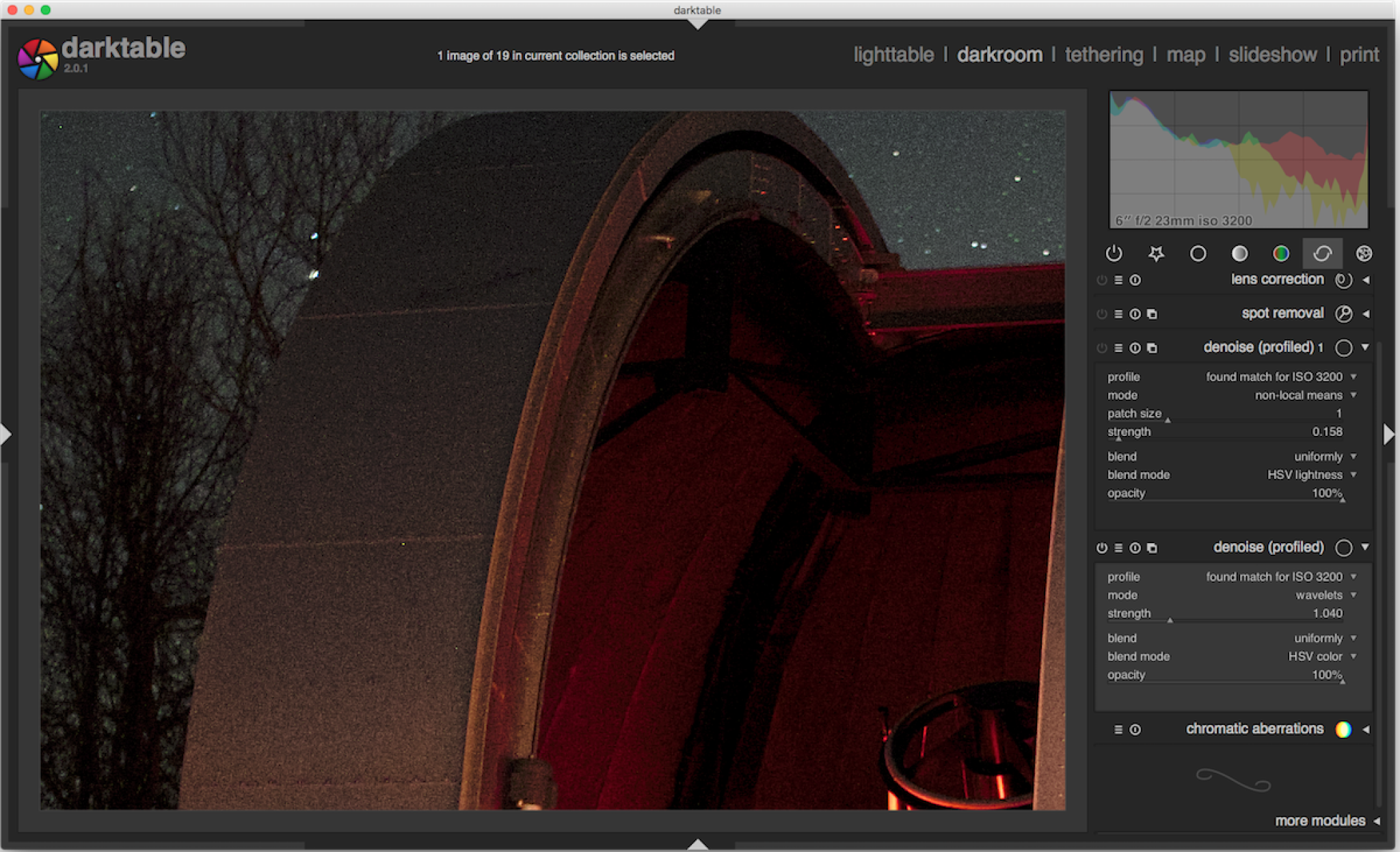

After the last post comparing Darktable and Lightroom in terms of noise reduction I went back and did more testing. I'm not sure why I never really tried setting the HSV lightness mask to the wavelets mode before, but I gave it a try and managed what I consider better results for this shot. Look for yourself below.

Wavelets VS Non-local Means


Settings for Wavelets on Left, Right for NLM


Just for comparison here is the Lightroom 5 output.

Adjusting the transparency on the HSV Lightness mask down a bit helped with the ugly pattern and sharpened things up a bit too.

This just goes to show that it pays to try new things. Having this many options available is a bit of a double-edged sword. If one chooses to really get in there and tweak things, Darktable can produce exemplary images. However, this comes with a steeper learning curve and a hit in editing time. Lightroom and ACR in general do a lot behind the scenes, whereas I'm finding Darktable to be more of a choose-your-own-adventure deal. There's no such thing as a free lunch.

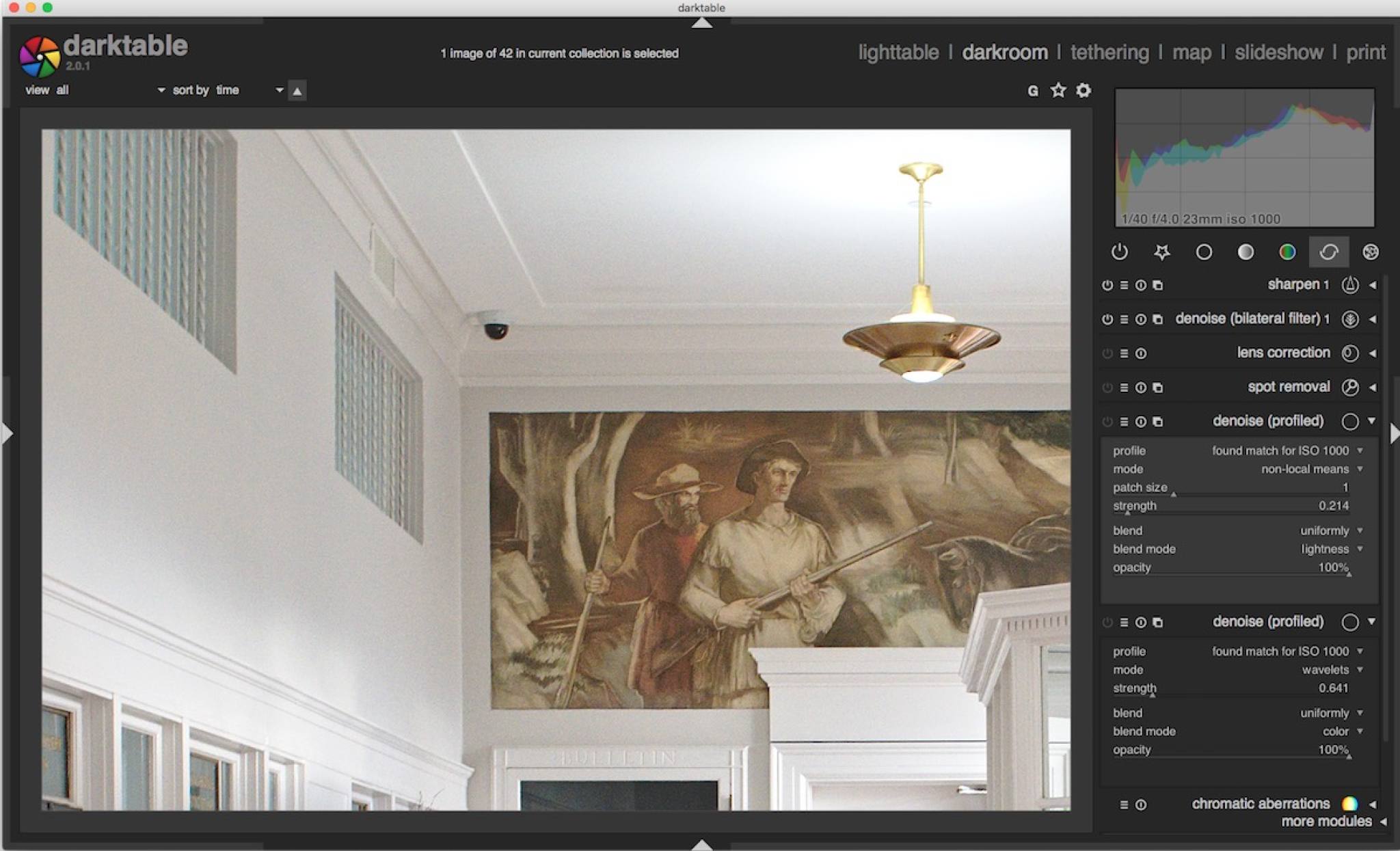

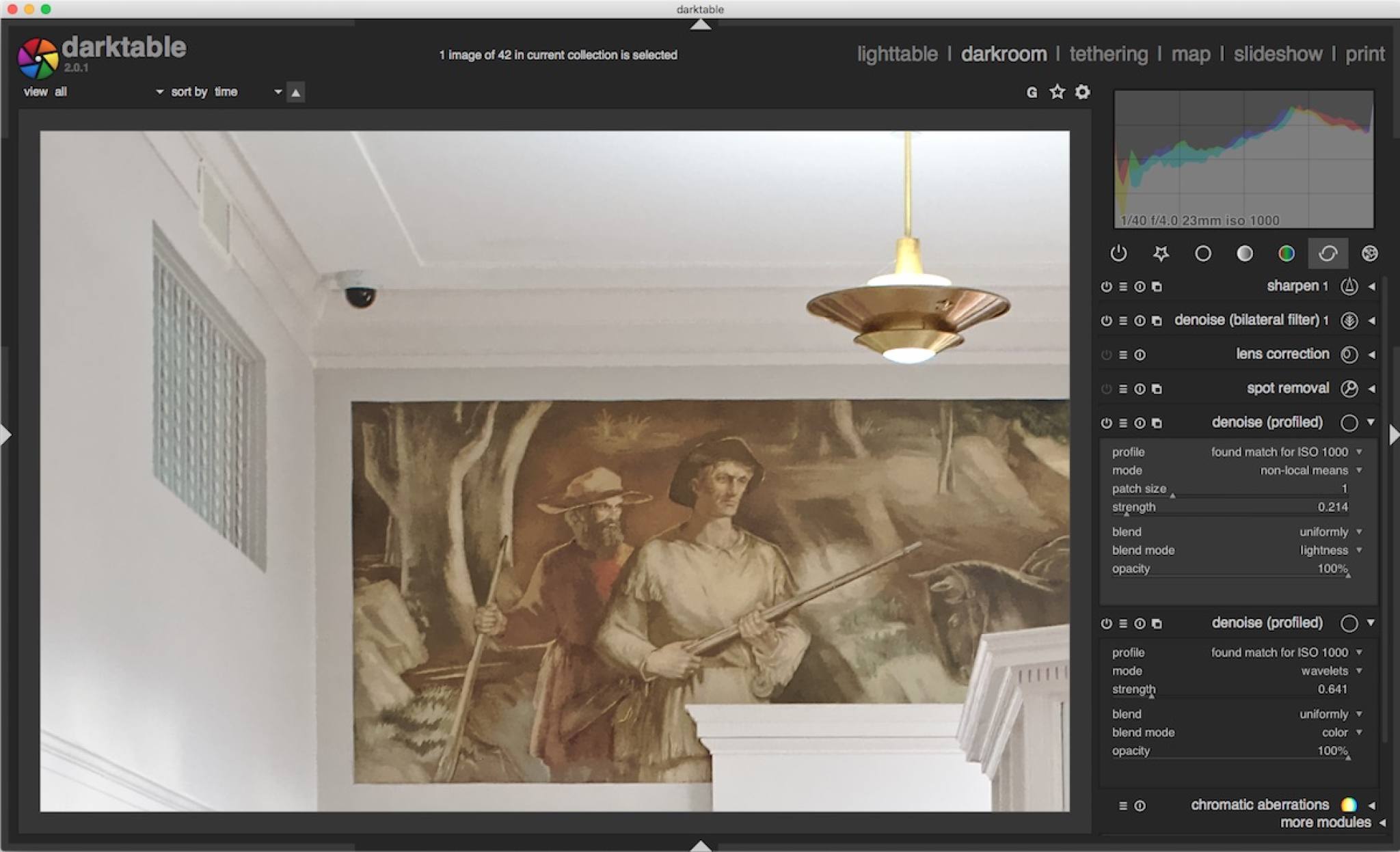

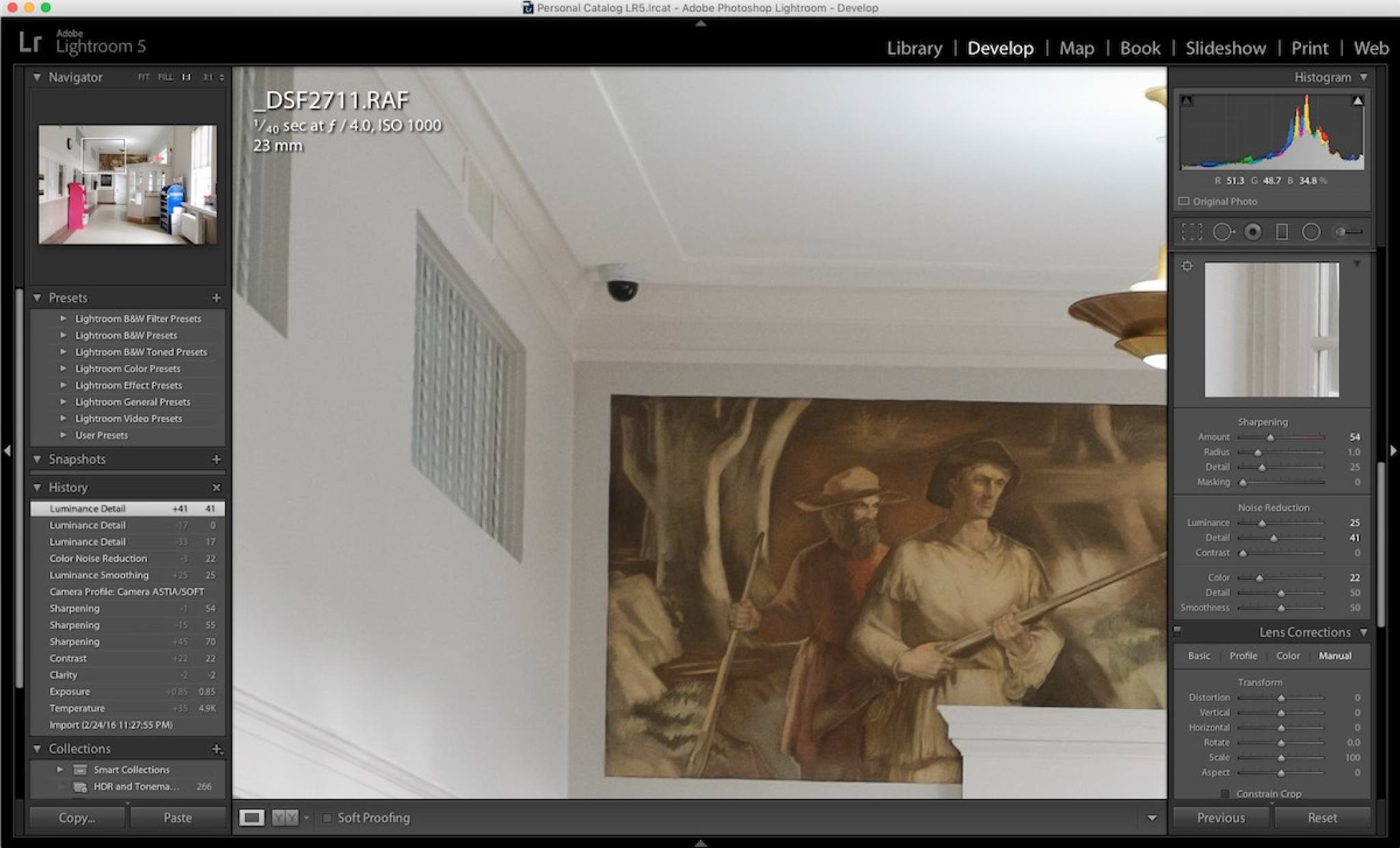

Pixel Peeping: Lightroom vs Darktable Noise Reduction

Quite a few of my shots are done at ISO 1600 and above, so noise reduction in post processing is something I pay attention to. I've been using Darktable over the last year and just wanted to do a quick comparison to Lightroom 5's NR features. No, I haven't bought Lightroom 6 yet. I barely use it as is, so sinking more money into it seems like a poor decision.

First, comparing a couple of well lit shots at ISO 1000 from the X100s.

Image with no noise reduction


Darktable profiled noise reduction, settings in image


Lightroom 5 noise reduction, settings in image


There isn't a lot of noise at ISO 1000, so either program does an OK job. Darktable seems to preserve more details but at the cost of some muddy colors and artifacts in places. Lightroom's output is smoother. I'm not sure whether this is the noise reduction or just differences in RAW processing and camera profiles. The screen captures exaggerate it some as well.

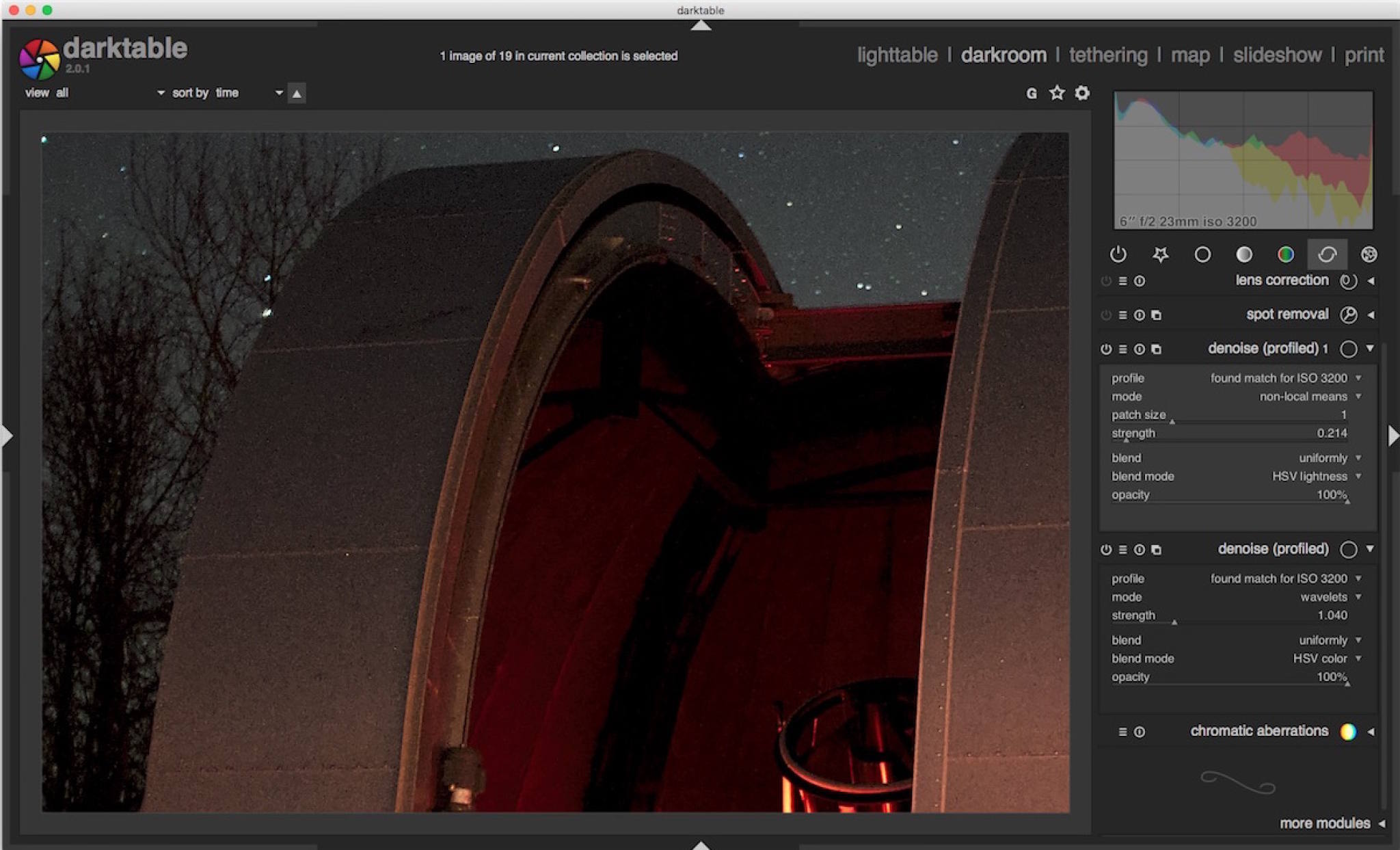

Next some rather dark photos from the Dark Sky Observatory. X100s at ISO 3200. Darktable's profiled noise reduction takes a fair bit more tweaking to get satisfactory results. I've taken the approach advised by Robert Hutton and done two passes of the module. The first set to wavelets mode, blending uniformly for HSV color to remove the color noise. This Darktable handles quite well. Where it runs into problems (like with the other images) is luminance noise.

ISO 3200 no noise reduction


Darktable color only noise reduction


Darktable color and luminance noise reduction


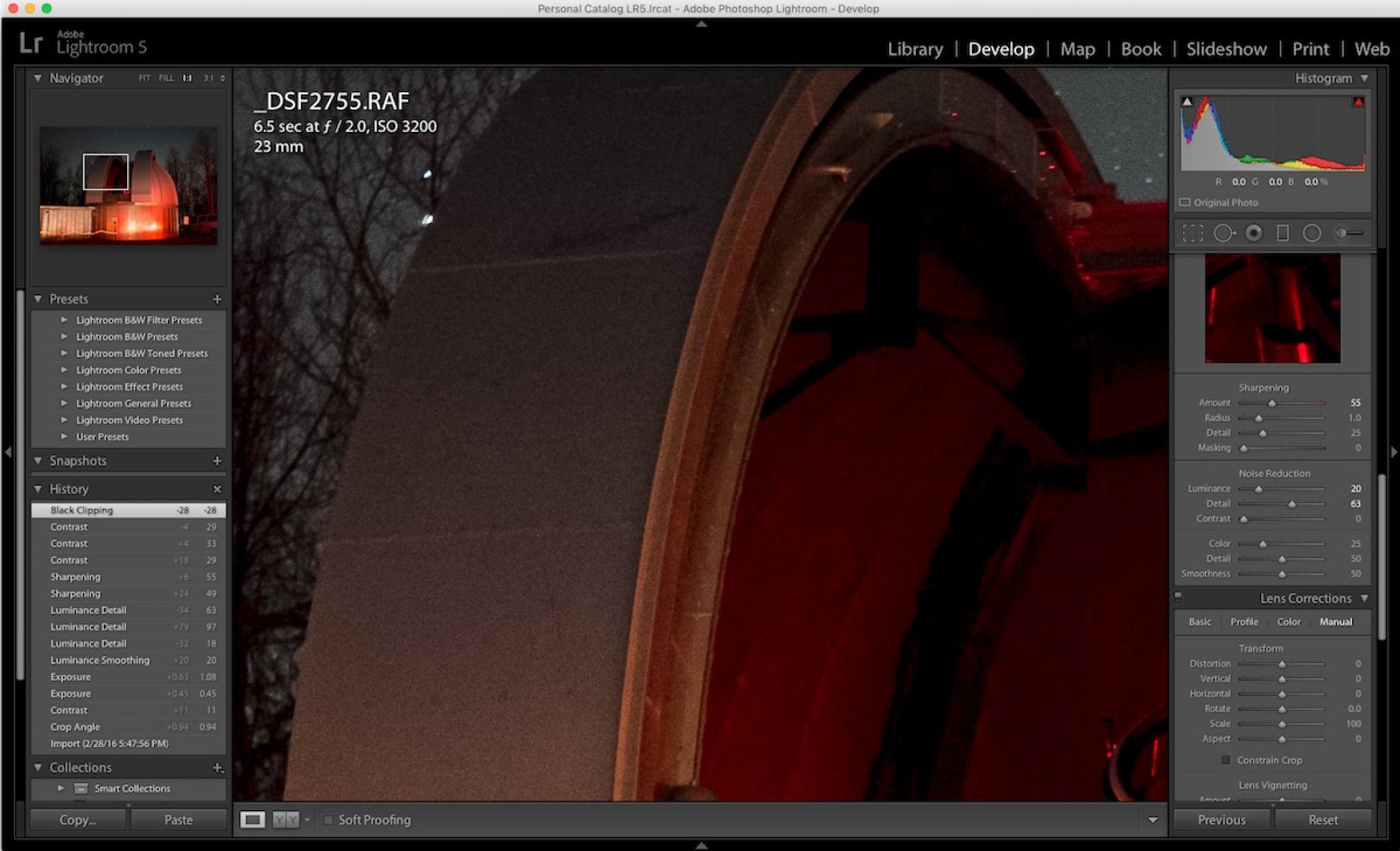

Lightroom noise reduction


After comparing the darker shots you can see where Lightroom pulls out in front with luminance smoothing. More detail is lost but the image is generally better IMO. Darktable's profiled denoise on an HSV lightness layer produces very blocky results; it kind of reminds me of Noise Ninja's output from 2007 or so. It's especially noticeable inside the dome where there's a very patchy pattern in the red light. If you crank up the strength much more the pattern becomes very pronounced. Darktable does do a great job on the color noise removal however. All in all the results aren't terrible.

Now, in all fairness Lightroom has been around about twice as long as Darktable and costs a whole lot more. The fact that you can get good results out of a free open source program is pretty incredible. Lightroom was pretty terrible at high ISO NR until version 3 too. Likewise I suspect future versions of Darktable will improve tremendously. But if you're doing a whole lot of shooting at higher ISOs a standalone noise reduction plugin might be a better bet. On OS X it's pretty easy to export the RAW to something like DFine and bring it back into Darktable if you're that picky.

Apollo 10 "Music"

Apparently there was a special on TV about music being heard by the Apollo 10 astronauts while coming around the far side of the moon. Conspiracy theorists had a field day with this, as have the usual array of Facebook pages that poorly understand science and engineering. As is the case with most things, the explanation for the noise is a bit more mundane than aliens.

For the uninitiated NASA has posted an MP3 of the recording. There is a lot of noise from equipment, probably some of the whine is due to power inverters but at around the 2:40 mark you can faintly hear some noise. Personally I wouldn't call it music but whatever. You can hear the lunar module pilot Gene Cernan commenting on it.

If you ask me it sounds like pretty standard feedback or interference from local electronics. The command and lunar modules used VHF radios for communication, which are pretty susceptible to interference from a wide range of appliances, inverters, lights, etc. Put your WiFi router near a leaky old microwave and see how far the range gets cut for a demonstration of this interference. Apollo 11 reported the same noise, but once the LM touched down Collins reported the woo-woo noises ceased. To me that sounds like some sort of ground issue or feedback, not aliens. Just the sheer amount of noise in that recording would lead me to think it was just some leaky electronics or feedback.

As to the classification of these recordings lending credence to the aliens theory: it was the middle of the Cold War, everything was classified outside of school lunch menus.

Turns out space is a pretty noisy place in the radio part of the spectrum. The gas giant planets have very active magnetic fields and are very loud radio sources. Cassini has provided us with "sounds" from Jupiter and Saturn. They're kind of creepy too:

So, it's not aliens or a government cover up. With most people these days using digital methods of telecommunication that are relatively noise free, this sort of thing may seem strange. However, noise and interference are common to analog radio signals. Anyone else remember static on the TV or radio stations fading in and out with certain weather conditions? It's the same sort of stuff here.

Linux Photography V: GIMP

I've been putting off writing this because I kept changing how I wanted to approach the end of this little series. The longer I waited the more comfortable I became with the tools and techniques and the more my work flow changed. While I plan on hitting the high points here this is still an ever evolving process. I'm making progress though and I find myself jumping back into the Adobe-sphere less and less.

GIMP has come a long way in the last few years. It actually beat Photoshop to the content aware tools by a year or so with Resynthesizer. Resynthesizer works great too and I've been making good use of it. However, GIMP has lagged behind elsewhere, most notably in handling higher bit depth files. That changed recently in GIMP 2.9.2, but that's still the development branch and the feature won't be in the main release until version 2.10. Luckily a couple of developers have managed to package it for the popular Debian based distributions through a PPA. There are also Mac and Windows builds of the GIMP development version out there that work well too.

Before GIMP 2.9.2 I really only used it for files that were bound for the web. Being limited to 8-bit files kept me away from it for heavy-duty print bound stuff. To be honest it probably doesn't make that much difference, but it's still nice to have all of the data available from my camera's RAW files there. I usually save out final edits as 16-bit TIFFs (remember, XCFs are like PSDs, not really a "final product" type of format) and export JPEGs from Darktable.

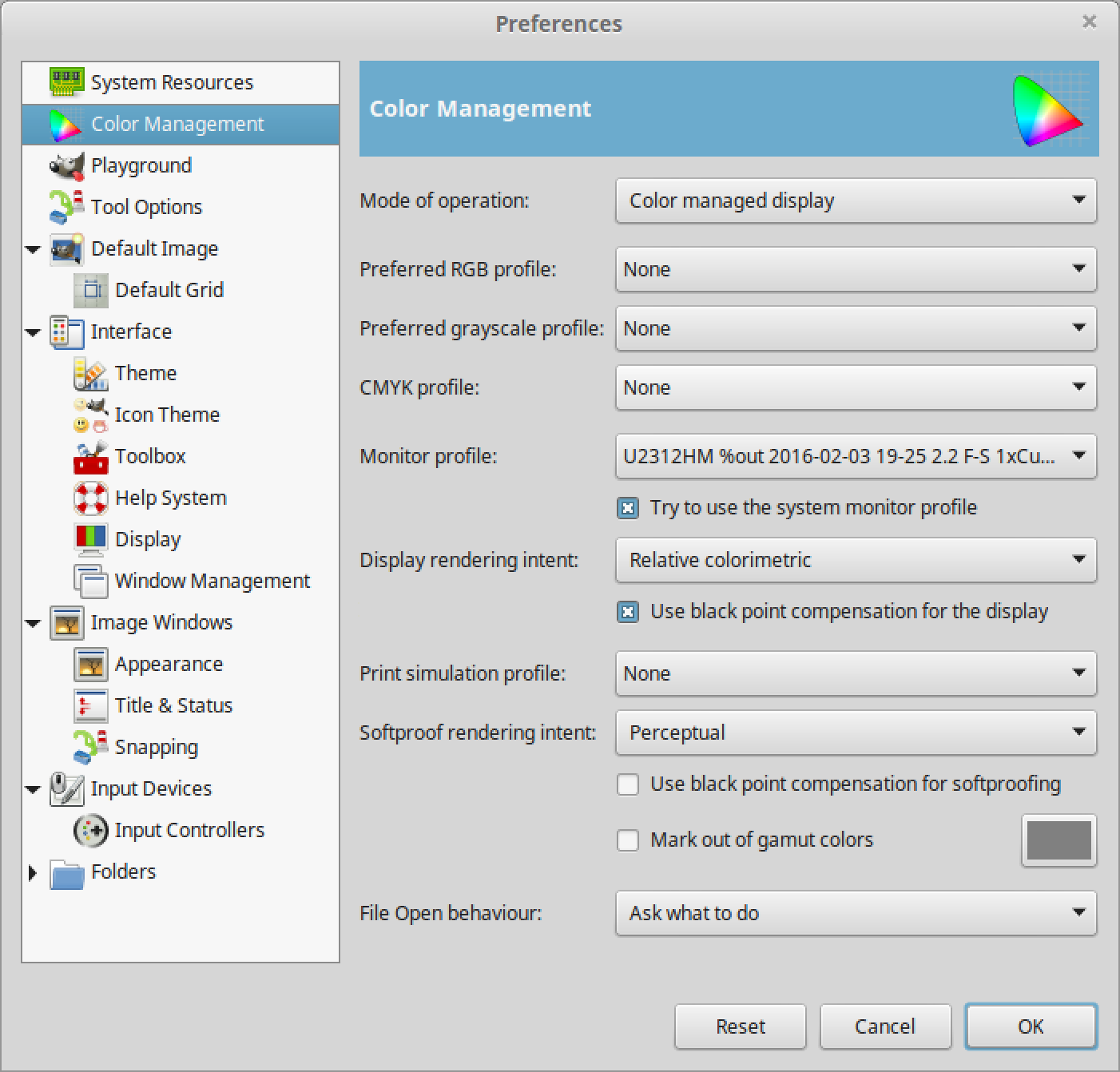

Some photographers like to swap the GIMP keyboard shortcuts for more Photoshop-esque ones. I tend to keep the defaults as I don't spend a lot of time in GIMP (or Photoshop for that matter) and I'm used to platform hopping and remembering different key bindings. YMMV. I do put GIMP into single window mode (View -> Single Window Mode) to make my screen a little less cluttered. Also make sure GIMP is set to use your monitor's color profile (under Edit -> Preferences -> Color Management).

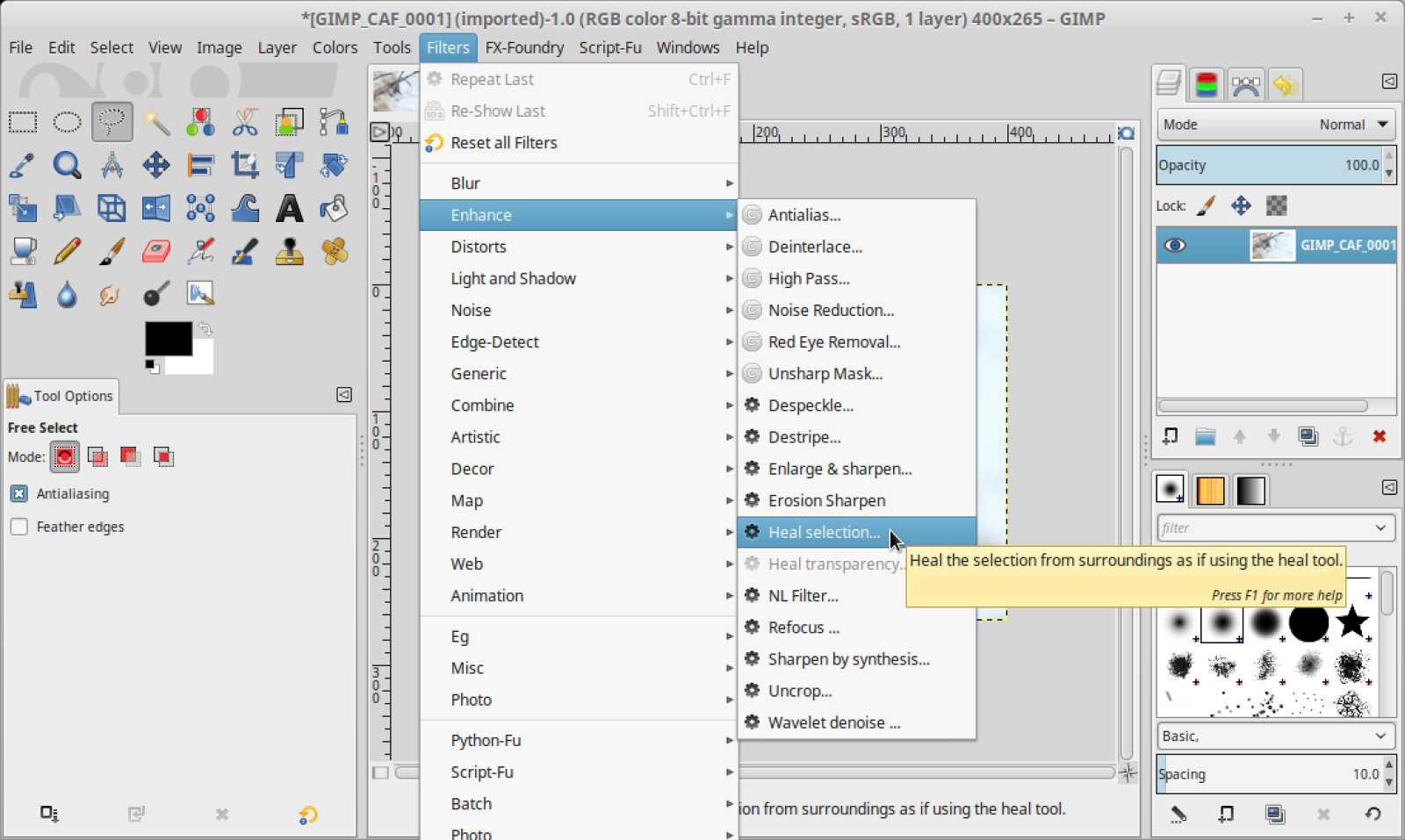

Make sure you get the plugins installed. The McGIMP version comes like this automatically, and in Debian/Ubuntu/Mint you'll need to install the gimp-plugin-registry package. The PPA with GIMP 2.9 has that too. This way you get the Resynthesizer plugin, which is ultra useful. Just select an area you want to nuke, go to Filters -> Enhance -> Heal selection and let it do its thing. It seems to work at least as well as Photoshop CS6's version.

GIMP gets the job done for my pixel editing needs. I'm not a graphic designer type so I really only use Photoshop for removing unwanted elements from a frame, touch ups and some very basic compositing. That type of work certainly doesn't need the latest version of CC (nor its recurring costs or QA problems). I suspect most photographers really only use about 5-10% of Photoshop's capability too.

Perhaps the most irritating thing about the experience is the lack of capability for Darktable to "round trip" like Lightroom or Capture One can do. Unfortunately this is due to technical issues. It would be great if you could specify a list of external editors and have Darktable automatically create a TIFF file with the edits applied and send it to GIMP. As it stands now you have to export it through the Lighttable module, open it in GIMP and reimport the file into Darktable. If you have to touch up a whole group of images in GIMP that can really slow things down.

GIMP is available for pretty much any platform out there, including Windows. It's pretty handy to have around. Just make sure you grab the 2.9 version for the 16 and 32-bit image support. It's also a little faster in my testing. Don't be scared by the warning messages about it being a development version.

So far it's been pretty straightforward to have a photography work flow that works in Linux. Most of this software works on OS X too. I actually use Darktable and GIMP on my MacBook Pro regularly. So even if you're not comfortable with installing Linux you can still have a more open work flow.

Breaking Down the LIGO Announcement

Today I get to put on my physicist hat and talk about a major discovery. Admittedly the aforementioned hat is a little dusty but I'm just excited I get to use those fancy physics degrees of mine for a minute. There may be some of my old professors reading this and to them I profusely apologize and I really hope I didn't screw the explanation up too much.

Anyway, around 100 years ago there was this fellow named Einstein who had funny ideas about gravity and things that moved sufficiently fast. In 1915 Einstein published his General Theory of Relativity, which dealt with gravity. Basically it said that spacetime was deformable and what we measure as gravity is due to mass distorting that structure. Because of something called the Lorentz invariance of general relativity we had to put a speed limit on the propagation of information, and this included gravity. This also broke with Newtonian physics, as Newton had assumed the information from a gravitational interaction was transmitted instantaneously. Out of this fell the prediction of gravitational waves. It seemed to make sense conceptually too (at least to me) given the physical construct of gravity general relativity was proposing.

Hypotheses are nice but as Wernher von Braun once said, "one test result is worth one thousand expert opinions." Fast forward around 100 years. Turns out Albert was right about a lot of things. We've measured the deflection of starlight around the sun during a total eclipse, confirmed time dilation as predicted by special relativity and explained the precession of the perihelion of Mercury with his theories. Over the years relativity has held up to scrutiny, so it's earned the right to be called a theory. To scientists, "theory" means something much stronger than it does in everyday speech.

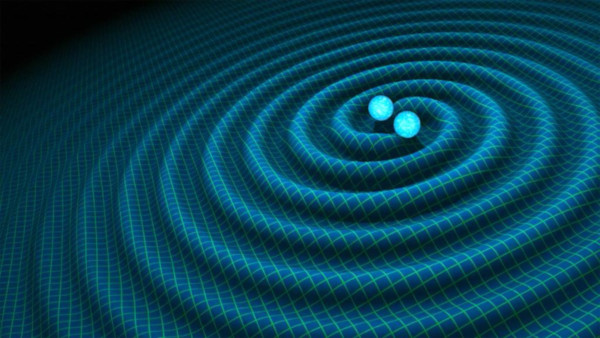

This week the team at LIGO announced they had successfully detected gravitational waves from colliding black holes. Now we can add gravitational waves to the things Einstein was right about. Great. So what does that mean? Well, first off this is a direct observation of binary black holes. Previously we had relied on observations of the space around black holes. The math said singularities should exist, but because we can't observe or measure anything directly from them (anything within the event horizon would have to break the speed of light, an Einsteinian no-no, to reach us) we've relied on watching what they do to the stuff around them.

Visualization of gravitational waves produced by black holes spiraling into each other.

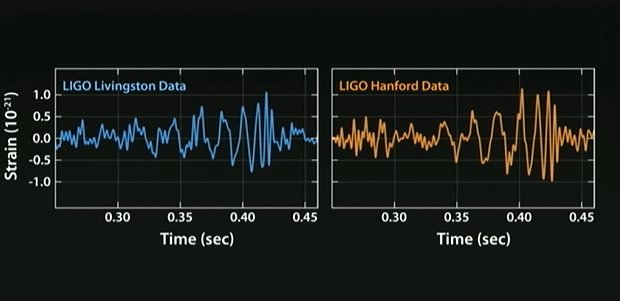

However, gravitational waves give us a back door of sorts into direct observations. When two massive bodies spiral into each other like that they produce changes in the curvature of spacetime that propagate out in a wavelike fashion. Put another way, the very thing everything is resting in is distorted in a rhythmic pattern and that pattern travels at the speed of light out in every direction from said event. LIGO measures this with a pair of observatories, each with an L-shaped detector whose arms are 4 km long. The fine folks over at LIGO send laser light down each leg, reflect it back and look at the interference pattern. When the spacetime distortion passes through, the pattern between the two beams changes and the wave can be measured. This is a gross simplification but you should get the idea. There are two observatories so they can corroborate their results, as repeatability and verification are important in science.
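To put a rough number on how small that distortion is, here is a back-of-the-envelope sketch. The 4 km arm length comes from the description above. The peak strain of about 1e-21 and the proton diameter are assumed round figures, not numbers from this post, so treat the result as an order of magnitude only.

```python
# Rough estimate of how much a LIGO arm changes length when a wave passes.
# Strain h is a fractional length change: delta_L = h * L.

ARM_LENGTH_M = 4_000.0        # 4 km arms, as described above
PEAK_STRAIN = 1.0e-21         # ASSUMPTION: typical peak strain, order of magnitude only
PROTON_DIAMETER_M = 1.7e-15   # ASSUMPTION: approximate proton diameter


def differential_arm_change(strain, arm_length_m):
    """Return the change in arm length difference (metres) for a given strain."""
    return strain * arm_length_m


if __name__ == "__main__":
    delta_l = differential_arm_change(PEAK_STRAIN, ARM_LENGTH_M)
    print(f"Arm length change: {delta_l:.1e} m")
    print(f"Fraction of a proton diameter: {delta_l / PROTON_DIAMETER_M:.1e}")
```

In other words the interferometer has to notice a change far smaller than a single proton, which is why the interference pattern is used instead of any direct measurement of length.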

Gravitational waves as detected by LIGO

That leads us to important part number two. This discovery pretty much opens a whole new realm of observational astronomy and science. Now you can have radio telescopes, optical telescopes and gravitational-wave telescopes to take measurements of distant objects. I imagine we'll be able to use gravitational-wave measurements to figure out more accurate masses of these objects, the energies involved in these collisions and so on. This discovery is on par with Galileo's first telescope. Indeed LIGO is to gravitational-wave astronomy what that glass-filled tube was to optical astronomy. Better instruments will allow for better data down the line and further testing of Einstein's theories.

Just like electromagnetic waves, there is an entire spectrum of gravitational waves to investigate. The masses and energies involved will dictate the frequency and amplitude of the waves, along with the size of the detector needed to see them. Some gravitational waves will take laser interferometers bigger than we can build here on Earth to detect. The closest analog is when Herschel discovered infrared and that the spectrum extended beyond the visible range. Pretty much everything we know about the universe comes from observations of the EM spectrum or particles interacting with detectors here on Earth. We now have another physical quantity to measure. It's like only being able to describe the make and model of a car and now suddenly you can also describe its color. Until the LIGO team's discovery we were basically blind to a fundamental physical interaction.
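As a rough illustration of how mass sets the frequency, the sketch below estimates the gravitational-wave frequency near the end of an inspiral using the textbook innermost stable circular orbit (ISCO) formula for a non-spinning system, f = c^3 / (6^1.5 * pi * G * M). The formula choice and the example masses are assumptions for illustration, not figures from this post or from the LIGO announcement.

```python
import math

# Physical constants in SI units (rounded).
G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8        # speed of light, m/s
M_SUN = 1.989e30   # solar mass, kg


def gw_frequency_at_isco(total_mass_solar):
    """Approximate gravitational-wave frequency (Hz) at the ISCO.

    ASSUMPTION: non-spinning system, so this is only an
    order-of-magnitude estimate of where the inspiral signal ends.
    """
    total_mass_kg = total_mass_solar * M_SUN
    return C**3 / (6**1.5 * math.pi * G * total_mass_kg)


if __name__ == "__main__":
    # Illustrative total masses in solar masses (hypothetical examples).
    for label, mass in [("stellar-mass binary", 65),
                        ("supermassive binary", 1e6)]:
        print(f"{label}: about {gw_frequency_at_isco(mass):.3g} Hz")
```

The heavier the system, the lower the frequency. Stellar-mass binaries land in the tens of hertz, while million-solar-mass systems come out in the millihertz range, which is the sort of signal that needs a detector far larger than anything we can build on the ground.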

Does this mean that Einstein had some sort of ultimate theory? Nope. In fact the discovery of gravitational waves will allow us to further stretch and test his math. He was really good at math by the way; that thing about him failing it is an urban legend to make you feel better about your own inability. Newton was thought to have a universal theory of gravitation until it failed to explain some phenomena. Today we have problems reconciling Einstein's relativity with some principles in quantum mechanics, so it's not without its problems. This is conjecture, but it's probable that relativity is a subset of another set of physical laws we're not aware of. Newton's theory of gravity actually falls out of the math from relativity, so there is precedent for such an idea. That is, Newton's laws of gravity apply to specific scenarios whereas Einstein's are more general. So it stands to reason that there may be an even more general set of theories we don't know about yet. I don't want to bash Newton too much. We still use his laws to put probes around other planets and the guy basically invented calculus on a dare (eat it Leibniz fans).

Practical, everyday applications? Probably nothing for the foreseeable future. Don't expect Star Trek warp drive, Mass Effect biotics or other science fiction leaps to come out of this either. The keyword there is fiction. Of course applications of this discovery could change as technology advances but I wouldn't hold my breath. I'm sure Einstein didn't envision the digital camera when he explained the photoelectric effect (what he won the Nobel Prize for, not relativity) and now practically the whole world has one at its disposal. In my opinion discovery shouldn't have to put food on the table or justify itself to be worthwhile. It's sad that we live in a world where simple curiosity isn't rewarded, but I digress. A greater understanding of the universe is a reward in itself. Well, back to my day gig of making sure college students can still get to Facebook.